Apple HTTP Live Streaming (HLS)

Hi Guys,

In my last blog, Kick start with Video Streaming, I briefly discussed different streaming technologies, different adaptive streaming implementations, on-premise solutions and cloud solutions.

Now I am going to discuss HTTP Live Streaming (HLS), which is one of the popular implementations of adaptive streaming. HLS is an HTTP-based media streaming communications protocol. It is supported by most clients/devices. You can find the detailed list here.

HLS supported formats

The protocol specification doesn't restrict which audio/video formats can be used. The current Apple implementation supports the formats below:

- Video Formats

  - H.264 Baseline Level 3.0, Baseline Level 3.1, Main Level 3.1, and High Profile Level 4.1

- Audio Formats

  - HE-AAC or AAC-LC up to 48 kHz, stereo audio

  - MP3 (MPEG-1 Audio Layer 3) 8 kHz to 48 kHz, stereo audio

  - AC-3

Encoders

The current Apple implementation supports encoders that produce MPEG-2 Transport Streams containing H.264 video and AAC audio (HE-AAC or AAC-LC).

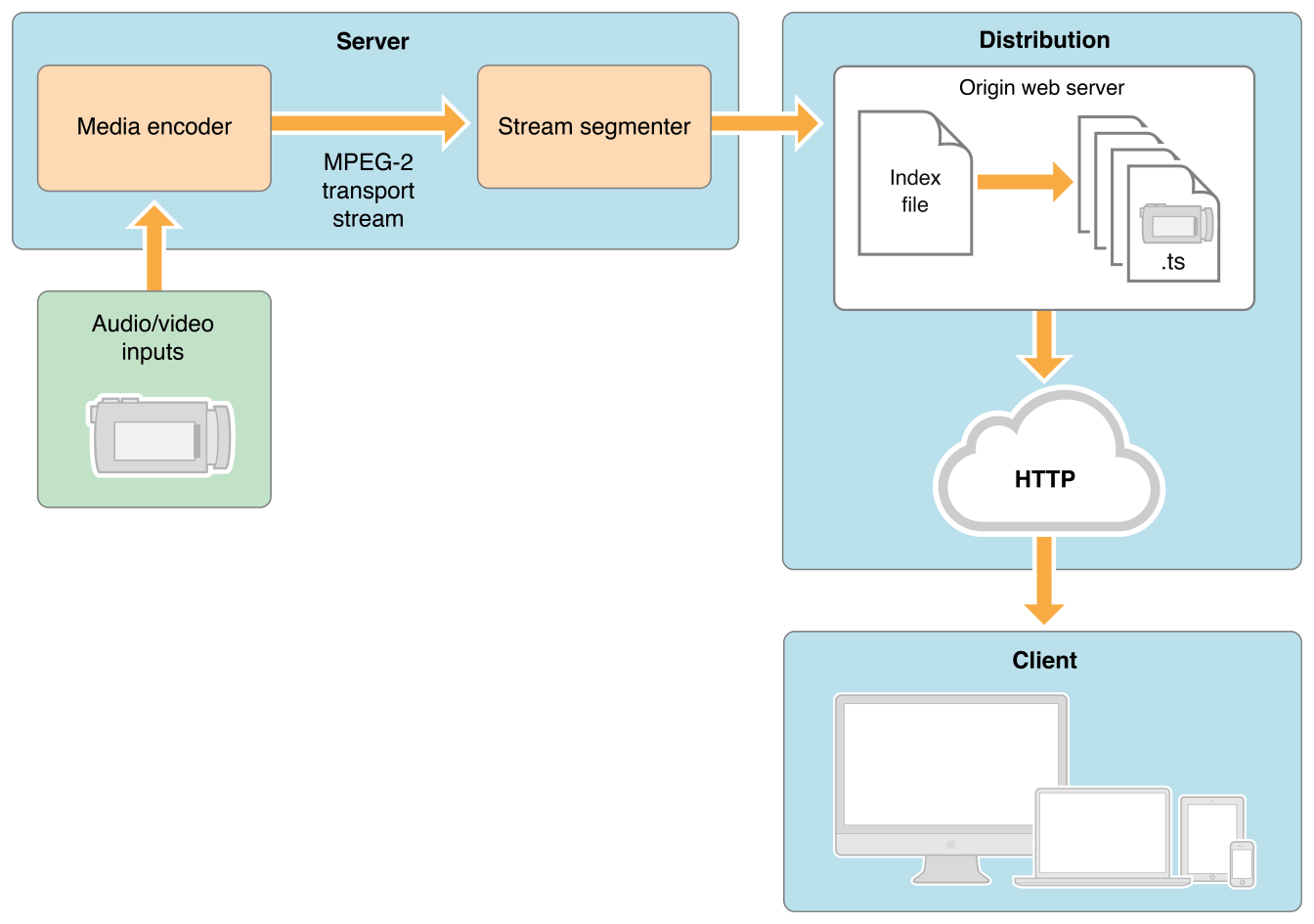

I am not going to explain all the theoretical stuff here, just give an architectural overview:

- Audio/video input files are encoded to HLS-supported formats by media encoders.

- The encoded stream is split into segments.

- An index (manifest) file is generated, containing information about the keyframe-aligned segments (chunks in the form of .ts files).

- Web servers serve the index file to the client, along with the chunks it requests.
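To make the index file less abstract, here is a minimal sketch of what the index (media playlist) for one quality level might look like, plus a tiny helper that lists the chunks it points to. The file name 480_out.m3u8, the segment names, the durations and the helper name list_segments are all assumptions chosen for illustration; they are not output from a real encode.

```shell
#!/bin/sh
# Write an illustrative index file (media playlist) into a scratch folder.
# Segment names and durations below are made up for this sketch.
workdir=$(mktemp -d)
cat > "$workdir/480_out.m3u8" <<'EOF'
#EXTM3U
#EXT-X-VERSION:3
#EXT-X-TARGETDURATION:2
#EXT-X-MEDIA-SEQUENCE:0
#EXTINF:2.000000,
480_out0.ts
#EXTINF:2.000000,
480_out1.ts
#EXTINF:1.500000,
480_out2.ts
#EXT-X-ENDLIST
EOF

# list_segments PLAYLIST (hypothetical helper): print the segment URIs,
# i.e. every line that is neither a tag/comment (starts with '#') nor blank.
list_segments() {
    grep -v -e '^#' -e '^[[:space:]]*$' "$1"
}

list_segments "$workdir/480_out.m3u8"
```

Each #EXTINF tag gives the duration of the chunk named on the next line, and #EXT-X-ENDLIST tells the player that no more chunks will be added (a video-on-demand stream rather than a live one).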

There are many media servers that do these things for you. You can find the list of such media servers here.

I am not going to explain any of those media servers here; their user manuals describe them better.

Instead, I am going to share the recipe for the secret sauce behind these media servers: how they create HLS streams from your video files.

So be ready to get dirty in the mud 😉

Required S/W, files and tools

- A sample video file

- FFmpeg (an open source video converter tool, download and install from here)

- Any text editing tool (Ex: Notepad)

- Any web server to serve the HLS stream (Ex: Tomcat, IIS, NGINX, Jetty, etc.)

- Any media player that supports HLS, to test the stream (Ex: VLC, JWPlayer, QuickTime Player, etc.)

Steps

- Create a folder named mediaContent and put the sample file (Ex: sample.mp4) inside it.

- Inside the mediaContent folder, create three more folders named 240p, 360p and 480p.

- Open a terminal, go into the mediaContent folder and execute the commands below:

      cd 480p
      ffmpeg -i ../sample.mp4 -c:a aac -strict experimental -c:v libx264 -s 854x480 -aspect 16:9 -f hls -hls_list_size 1000000 -hls_time 2 480_out.m3u8
      cd ..
      cd 360p
      ffmpeg -i ../sample.mp4 -c:a aac -strict experimental -c:v libx264 -s 640x360 -aspect 16:9 -f hls -hls_list_size 1000000 -hls_time 2 360_out.m3u8
      cd ..
      cd 240p
      ffmpeg -i ../sample.mp4 -c:a aac -strict experimental -c:v libx264 -s 426x240 -aspect 16:9 -f hls -hls_list_size 1000000 -hls_time 2 240_out.m3u8
      cd ..
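The three commands differ only in the folder name and the frame size, so they can also be driven from a small loop. This is just a sketch under a few assumptions: the helper names run and encode_rendition and the DRY_RUN switch are my own inventions, ffmpeg is expected to be on your PATH, and sample.mp4 is expected to be in the current folder. By default the script only prints what it would execute; set DRY_RUN=0 to really encode.

```shell
#!/bin/sh
# run CMD...: execute the command, or only print it when DRY_RUN=1 (the default).
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# encode_rendition INPUT NAME SIZE, e.g. encode_rendition sample.mp4 480p 854x480
# Runs in a subshell so the 'cd' does not leak into the caller.
encode_rendition() {
    (
        run mkdir -p "$2" &&
        run cd "$2" &&
        run ffmpeg -i "../$1" -c:a aac -strict experimental -c:v libx264 \
            -s "$3" -aspect 16:9 -f hls -hls_list_size 1000000 -hls_time 2 \
            "${2%p}_out.m3u8"    # "480p" -> "480_out.m3u8"
    )
}

# Same three qualities as the manual commands, as "folder:size" pairs.
for rendition in 480p:854x480 360p:640x360 240p:426x240; do
    encode_rendition sample.mp4 "${rendition%%:*}" "${rendition#*:}"
done
```

Adding a fourth quality then only means adding one more "folder:size" pair to the loop (and one more entry to the master playlist).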

- Inside the mediaContent folder, create a file named mediaFilePlaylist.m3u8 with the content below and save it. Each tag and each playlist path must be on its own line, and there must be no space after the comma inside a tag:

      #EXTM3U
      #EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=700000
      240p/240_out.m3u8
      #EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=1000000
      360p/360_out.m3u8
      #EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=2000000
      480p/480_out.m3u8
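The BANDWIDTH values in the master playlist are hand-picked, and the ffmpeg commands do not set a target bitrate, so the real streams may come out higher or lower. As far as I understand the HLS specification, BANDWIDTH is meant to reflect the peak segment bit rate (in bits per second) of each stream. The sketch below estimates that number from the generated files; the function name peak_bitrate is my own, and it assumes the chunks sit in the same folder as their index file.

```shell
#!/bin/sh
# peak_bitrate PLAYLIST (hypothetical helper): print the highest per-segment
# bit rate in bits per second, computed as size_in_bytes * 8 / EXTINF duration.
peak_bitrate() {
    playlist=$1
    dir=$(dirname "$playlist")
    duration=""
    while IFS= read -r line || [ -n "$line" ]; do
        line=$(printf '%s' "$line" | tr -d '\r')   # tolerate CRLF line endings
        case $line in
            '#EXTINF:'*)
                duration=${line#'#EXTINF:'}        # drop the tag name
                duration=${duration%%,*}           # drop the optional title
                ;;
            '#'*|'')
                ;;                                 # other tags and blank lines
            *)
                bytes=$(wc -c < "$dir/$line")      # segment size in bytes
                printf '%s %s\n' "$bytes" "$duration"
                ;;
        esac
    done < "$playlist" |
    awk '$2 > 0 { rate = $1 * 8 / $2; if (rate > peak) peak = rate }
         END    { printf "%d\n", peak }'
}

# Report each quality if its index file exists (i.e. after encoding).
for p in 240p/240_out.m3u8 360p/360_out.m3u8 480p/480_out.m3u8; do
    if [ -f "$p" ]; then
        printf '%s %s\n' "$p" "$(peak_bitrate "$p")"
    fi
done
```

If a reported number is well above the BANDWIDTH you wrote for that quality, raise the value in mediaFilePlaylist.m3u8 so the player does not pick a stream the connection cannot sustain.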

- If you want to play it locally, open mediaFilePlaylist.m3u8 with a media player (Ex: VLC player).

- To host the video on a server, place the mediaContent folder on a web server and play the file from a remote location, Ex:

Great, now you have your own HLS media server.

Let me recap the whole process:

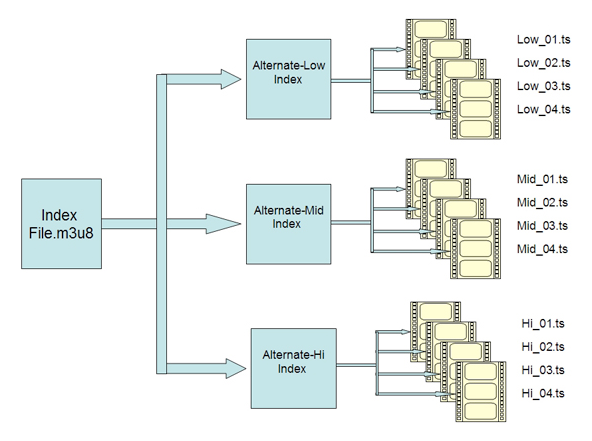

- Using the FFmpeg tool, you transcoded the sample video into three qualities (240p, 360p and 480p).

- For each quality, FFmpeg created chunks (.ts files) and an index file (.m3u8) containing the segment info, stored in separate folders (240p, 360p and 480p).

- You created a master index file (.m3u8) that lists all three index files, whose segments are keyframe-aligned.

- You hosted the content on a web server for remote access.

- You played the master index file in a media player.

- The media player decides which stream to play, using logic based on parameters such as the client's computational capacity (CPU), internet bandwidth and memory utilization.

HLS uses multiple encoded files, with index files directing the player to the different streams and to the chunks of audio/video data within those streams.
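Every player has its own selection logic, so the following is only a toy illustration of the bandwidth part of that decision, not how VLC or any other player actually works. It picks the stream with the highest BANDWIDTH that still fits the measured connection speed and falls back to the lowest one otherwise. The function name select_variant and the sample numbers are assumptions; CPU and memory are ignored here.

```shell
#!/bin/sh
# Illustrative copy of the master playlist, written to a scratch folder.
workdir=$(mktemp -d)
cat > "$workdir/mediaFilePlaylist.m3u8" <<'EOF'
#EXTM3U
#EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=700000
240p/240_out.m3u8
#EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=1000000
360p/360_out.m3u8
#EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=2000000
480p/480_out.m3u8
EOF

# select_variant MASTER_PLAYLIST AVAILABLE_BITS_PER_SECOND (hypothetical helper)
select_variant() {
    awk -v avail="$2" '
        /^#EXT-X-STREAM-INF:/ {
            bw = 0
            # The leading [:, ] keeps AVERAGE-BANDWIDTH from matching.
            if (match($0, /[:, ]BANDWIDTH=[0-9]+/))
                bw = substr($0, RSTART + 11, RLENGTH - 11) + 0
            want_uri = 1          # the next non-tag line is this stream URI
            next
        }
        want_uri && !/^#/ && NF {
            if (lowest_uri == "" || bw < lowest_bw) { lowest_bw = bw; lowest_uri = $0 }
            if (bw <= avail + 0 && bw > best_bw)    { best_bw = bw; best_uri = $0 }
            want_uri = 0
        }
        END { print (best_uri != "" ? best_uri : lowest_uri) }
    ' "$1"
}

# A connection measured at 1.5 Mbit/s gets the 360p stream.
select_variant "$workdir/mediaFilePlaylist.m3u8" 1500000
```

A real player repeats this kind of decision while the video plays, which is why it can switch quality between chunks when the connection speed changes.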

If you don’t want to use a web server, you can upload the mediaContent folder directly to S3 and play the playlist file mediaFilePlaylist.m3u8 from there to get the adaptive stream remotely.

Ex: yourS3Bucket.s3.amazonaws.com/mediaContent/mediaFilePlaylist.m3u8

Cheers!!!

EXCELLENT article! Simple and objective! I’m studying HLS and how to build a SaaS that receives files and makes streams. The other part is learning how to read a stream and create a file. And I need to understand live HLS too. Finally, I need to calculate how much it could cost with cloud services such as AWS, Azure, Google... A lot of work!

There are multiple vendors who provide such solutions at very reasonable charges. You can start with Wowza cloud, which charges you only on the basis of data used, with no monthly or other fixed charges.