Docker Swarm on AWS

This blog post covers Docker Swarm, a relatively new addition to the Docker ecosystem. Swarm clusters multiple Docker engines so that containers can be launched on any node in the cluster. Two mechanisms decide where containers are launched and managed on the cluster nodes:

1. Scheduling Algorithms

There are three algorithms for choosing the node on which a container is deployed:

a) Random – New containers are placed on a randomly chosen node in the cluster.

b) Bin Packing – New containers are placed on a single node until that node's resources are exhausted, and only then on the next node.

c) Spread – This is currently the default algorithm in Docker Swarm. New containers are launched on the node running the fewest containers.
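The three strategies can be illustrated with a small shell sketch. This is not Swarm's actual scheduler: the input format (one `<node> <running containers> <capacity>` line per node) and the function names `pick_random`, `pick_spread` and `pick_binpack` are assumptions made for this illustration, and the real scheduler looks at more than a simple container count.

```shell
# Each input line: "<node> <running containers> <capacity>" (hypothetical format).

# Random: pick any node.
pick_random() {
  awk 'BEGIN { srand() }
       { nodes[NR] = $1 }
       END { if (NR > 0) print nodes[int(rand() * NR) + 1] }'
}

# Spread: pick the node running the fewest containers (first one wins a tie).
pick_spread() {
  awk 'NR == 1 || $2 + 0 < min { min = $2 + 0; node = $1 }
       END { if (NR > 0) print node }'
}

# Bin packing: pick the fullest node that still has room for one more container.
pick_binpack() {
  awk '$2 + 0 < $3 + 0 && (!found || $2 + 0 > max) { max = $2 + 0; node = $1; found = 1 }
       END { if (found) print node }'
}
```

For example, feeding the lines `docker1 3 4`, `docker2 1 4` and `docker3 4 4` to `pick_spread` selects docker2, while `pick_binpack` selects docker1 because docker3 is already full. In standalone Swarm the strategy is, as far as I know, chosen with the `--strategy` option of `swarm manage`.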

2. Filters

Filters are additional rules that narrow down which nodes the next container may be placed on. They can override the placement that the above algorithms would otherwise choose.

a) Affinity Filters: These place new containers relative to existing containers, based on parameters such as the container name, ID or any other container-specific attribute.

b) Constraint Filters: With these filters we tag our instances, and the node for the next container is chosen on the basis of these tags.

c) Resource Filters: These filter nodes on the basis of the physical node's resources. For example, if a container needs port 80 on the host machine, it is scheduled on a node where host port 80 is still available.
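The port example above can be sketched in shell as follows. The input format (one `<node> <ports in use>` line per node, with `-` meaning no ports in use) and the function name `nodes_with_free_port` are hypothetical and only serve to show the idea of filtering out unsuitable nodes before a scheduling algorithm picks one.

```shell
# Each input line: "<node> <comma-separated host ports in use, or - for none>"
# (hypothetical format). Prints the nodes on which the requested port is free.
nodes_with_free_port() {
  awk -v port="$1" '{
    n = split($2, used, ",")
    free = 1
    for (i = 1; i <= n; i++) if (used[i] == port) free = 0
    if (free) print $1
  }'
}
```

Given the lines `docker1 80,443`, `docker2 -` and `docker3 8080`, calling `nodes_with_free_port 80` leaves docker2 and docker3 as candidates; the scheduling algorithm would then choose between those two.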

STEP 1: Installing Docker Engine

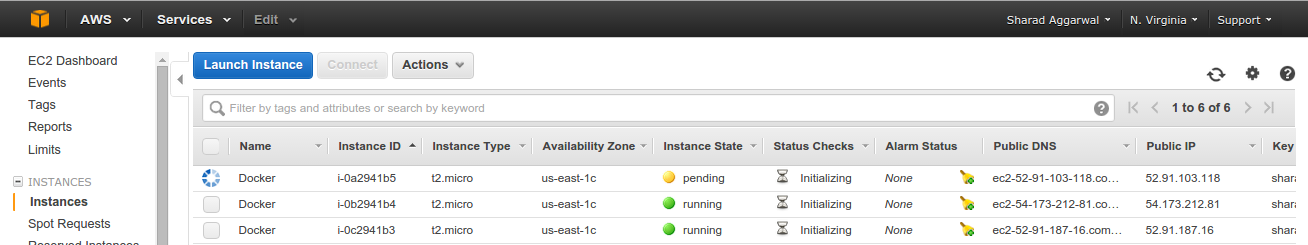

So, let’s start with a hands-on exercise on Docker Swarm. For this exercise, three AWS instances were launched using the Ubuntu 14.04 AMI, and the Docker Swarm environment will be built on these nodes. The IPs of these nodes are listed below:

Docker1 172.31.52.155 ip-172-31-52-155

Docker2 172.31.52.156 ip-172-31-52-156

Docker3 172.31.52.157 ip-172-31-52-157

First, Docker Engine should be installed on all 3 nodes using the steps below:

[js]# apt-get update

# apt-get install linux-image-generic-lts-trusty wget

# reboot

# wget -qO- https://get.docker.com/ | sh

[/js]

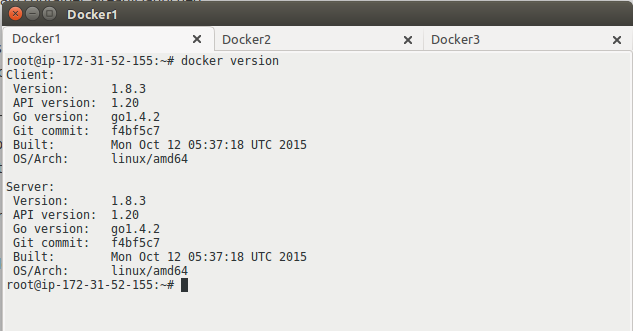

Note: The Docker Engine version should be the same on all the nodes.
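One way to check this is to collect the version string from each node and compare them. The helper below is a minimal sketch; the name `versions_match` is made up for this post, and the usage in the comment assumes SSH access as the `ubuntu` user and a Docker client that supports `docker version --format`.

```shell
# Reads one version string per line on stdin and succeeds only if there is
# at least one line and all lines are identical.
versions_match() {
  [ "$(sort -u | grep -c .)" -eq 1 ]
}

# Possible usage (assumes SSH access to the three nodes as user "ubuntu"):
#   for ip in 172.31.52.155 172.31.52.156 172.31.52.157; do
#     ssh ubuntu@"$ip" "docker version --format '{{.Server.Version}}'"
#   done | versions_match && echo "all nodes run the same Docker version"
```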

STEP 2: Install Docker Swarm

In the above commands, we updated the kernel so that the latest Docker version can be installed. It is important to reboot the nodes so that the system boots with the new kernel. After installing Docker, Swarm requires a few dependencies as well as the Go language, version 1.4 or above. Run the commands below on all 3 nodes to prepare for the Swarm installation:

[js]

# apt-get install binutils bison gcc make git

# bash < <(curl -s -S -L

# source ~/.bashrc

[/js]

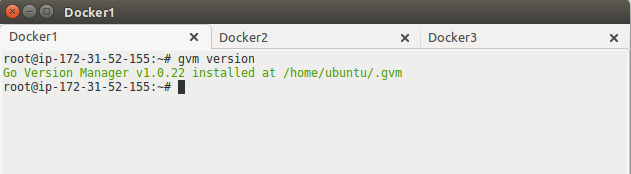

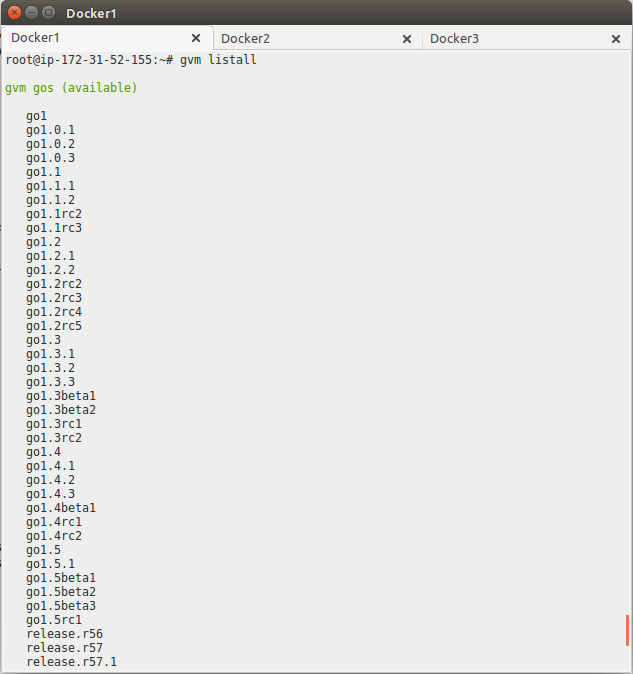

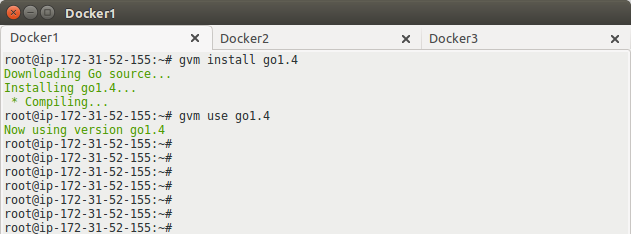

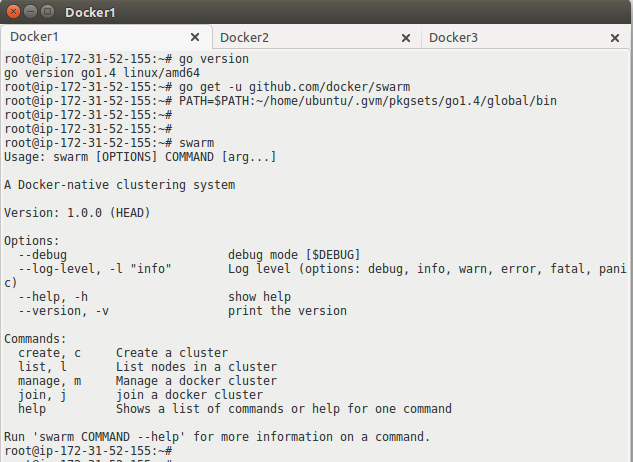

Now, using gvm (Go Version Manager), set up the required version of Go, then install Swarm and add its binary directory to the PATH environment variable. The commands for these operations are given below and should be run on all 3 servers:

[js]

# gvm version

# gvm listall

# gvm install go1.4

# gvm use go1.4

# go version

# go get -u github.com/docker/swarm

# export PATH=$PATH:/home/ubuntu/.gvm/pkgsets/go1.4/global/bin

[/js]
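Since Swarm needs Go 1.4 or above, it can be handy to check the output of `go version` in a script instead of by eye. The function below is only a sketch: its name `go_is_at_least_1_4` is hypothetical, and it assumes the usual output shape `go version go<major>.<minor>... <os>/<arch>`.

```shell
# Reads the output of `go version` on stdin, e.g. "go version go1.4 linux/amd64",
# and succeeds if the reported version is 1.4 or newer.
go_is_at_least_1_4() {
  awk '{
    v = $3
    sub(/^go/, "", v)            # "go1.4" -> "1.4"
    split(v, part, ".")
    major = part[1] + 0
    minor = part[2] + 0
    ok = (major > 1 || (major == 1 && minor >= 4))
  }
  END { exit (ok ? 0 : 1) }'
}
```

It could be used as `go version | go_is_at_least_1_4 && echo "Go is recent enough"`.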

The output of the above commands should match the screenshots below. If you come across any errors, please check your steps again:

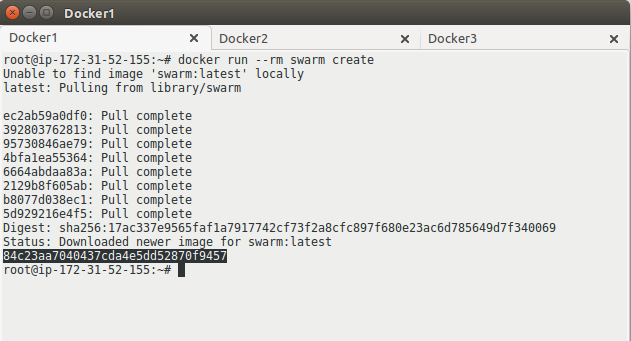

At this point, Swarm is installed. Now we need to create an empty Swarm cluster using the “swarm create” command on only 1 of the nodes (any one of the 3). When it runs, it generates a unique token ID. Using that token ID, all 3 nodes will be joined to the cluster. The steps to achieve this are given below:

STEP 3: Configuring Swarm Cluster

The command below should be run on only 1 of the 3 nodes. It generates the token ID that is used later to join the nodes to the cluster:

[js]# docker run --rm swarm create[/js]

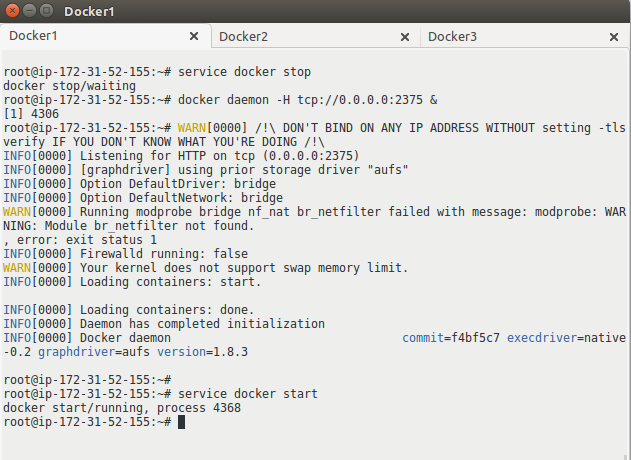

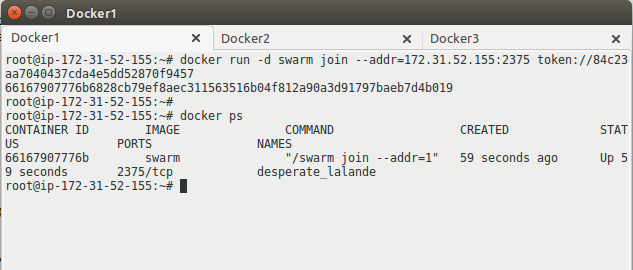

The commands below have to be executed on all 3 servers:

[js]# sudo service docker stop

# docker daemon -H tcp://0.0.0.0:2375 &

# service docker restart

# docker run -d swarm join --addr=<IP address>:2375 token://<token ID> &[/js]
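Only the address changes from node to node in the join command: each node advertises its own IP, while the token is the same everywhere. The sketch below prints the command for a given node so it can be reviewed before it is run; the function name `swarm_join_cmd` is hypothetical.

```shell
# Prints the join command for one node.
# Arguments: <node IP> <token ID>
swarm_join_cmd() {
  printf 'docker run -d swarm join --addr=%s:2375 token://%s\n' "$1" "$2"
}
```

For example, `swarm_join_cmd 172.31.52.155 "$TOKEN"` prints the command to run on Docker1, where `TOKEN` is assumed to hold the ID generated by “swarm create”.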

Running the command below on the nodes confirms that all three are now part of the Swarm cluster:

[js]# docker run --rm swarm list token://<token ID>[/js]
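The list can also be checked in a script. The sketch below assumes that “swarm list” prints one `<ip>:<port>` entry per line and that every node joined on port 2375 as in this exercise; the function name `all_nodes_joined` is made up for this post.

```shell
# Reads the output of "swarm list" on stdin and succeeds only if every IP
# passed as an argument is listed with port 2375.
# The body runs in a subshell so its variables do not leak into the caller.
all_nodes_joined() (
  listed=$(cat)
  for ip in "$@"; do
    printf '%s\n' "$listed" | grep -qxF "$ip:2375" || exit 1
  done
)
```

The output of the list command would be piped into it, for example `... swarm list token://<token ID> | all_nodes_joined 172.31.52.155 172.31.52.156 172.31.52.157`.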

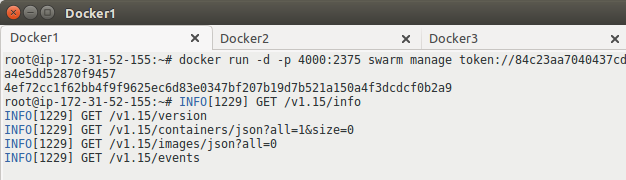

After all 3 nodes have successfully joined the cluster, one node has to be promoted to manager using the command below:

[js]# docker run -d -p 4000:2375 swarm manage token://<token ID>[/js]

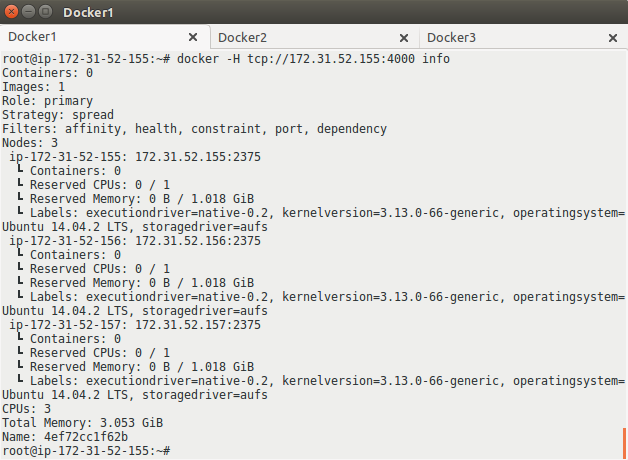

To list the details of the cluster, the following command can be used:

[js]# docker -Htcp://<node IP>:4000 info[/js]
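To pull a single value out of that output, a small filter is enough. The sketch below assumes the manager reports top-level lines such as `Nodes: 3` and `Strategy: spread`, which is how I remember the output of standalone Swarm; verify this against your own output. The function name `swarm_info_field` is hypothetical.

```shell
# Reads "docker info" output from the Swarm manager on stdin and prints the
# value of one top-level field, e.g. "Nodes" or "Strategy".
# Indented per-node lines are ignored because their first field starts with a space.
swarm_info_field() {
  awk -F': *' -v key="$1" '$1 == key { print $2; exit }'
}
```

For example, piping the info output into `swarm_info_field Nodes` should print 3 once all nodes have joined.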

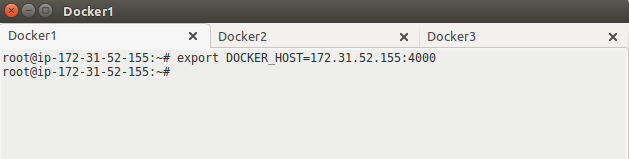

Now, the DOCKER_HOST variable has to be exported on all 3 servers (including the manager node) with the IP of the manager node, using the command:

[js]# export DOCKER_HOST=<manager node IP>:4000[/js]

STEP 4: Launch Containers in Swarm Cluster

Now the Docker Swarm cluster is ready. New containers can be launched in the cluster from any of the nodes by simply executing the “docker run” command. Let’s launch a few containers and check that they can land on any of the servers in the Swarm cluster:

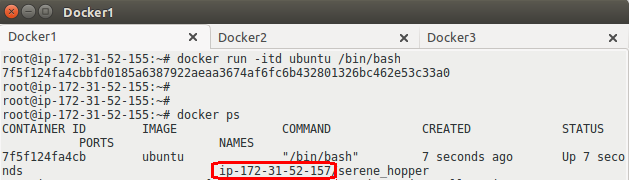

[js]# docker run -itd ubuntu /bin/bash[/js]

In the above screenshot, it can be clearly seen that although the command was run on node Docker1, the container was launched on node Docker3 (as per the algorithm used).
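To see how containers are distributed, the container names can be grouped by node. This sketch assumes that the Swarm manager reports names in the form `<node>/<container>`; the function name `containers_per_node` is hypothetical.

```shell
# Reads container names in the form "<node>/<container>" on stdin and prints
# "<node> <count>" for each node, sorted by node name.
containers_per_node() {
  cut -d/ -f1 | sort | uniq -c | awk '{ print $2, $1 }'
}
```

With a Docker client that supports `--format`, it could be fed with `docker ps --format '{{.Names}}' | containers_per_node`.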

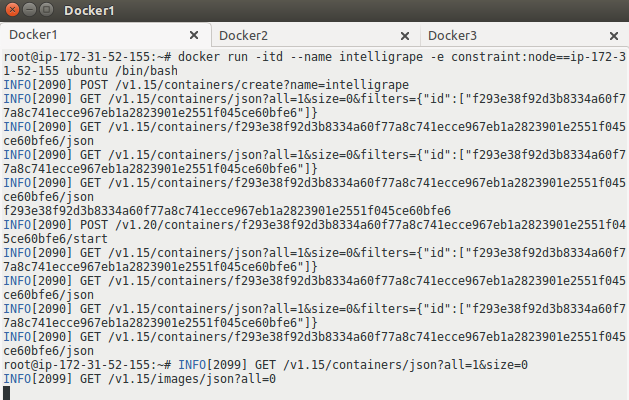

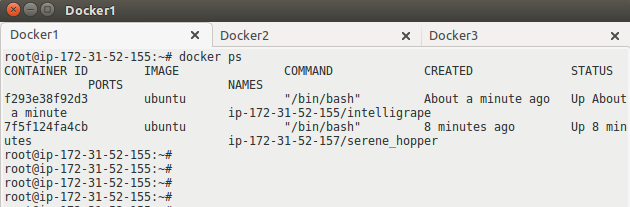

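Similarly, containers can be launched according to constraint or affinity filters using the commands given below. The first pins a container named “intelligrape” to a specific node; the second places a new container on whichever node is running “intelligrape”. Container names must be unique, so the second container is left unnamed:

[js]# docker run -itd --name intelligrape -e constraint:node==ip-172-31-52-155 ubuntu /bin/bash

# docker run -itd -e affinity:container==intelligrape ubuntu /bin/bash[/js]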

In the above screenshot, we have launched a container named “intelligrape” using the constraint filter. This is verified below. Similarly, affinity and resource filters can also be used.
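The way a filter expression such as `node==ip-172-31-52-155` is matched against a node can be sketched as below. This is a simplified illustration, not Swarm's own code: the function name `matches_constraint` is hypothetical, and only exact `==` and `!=` comparisons are handled.

```shell
# matches_constraint <expression> <actual value>
# Succeeds if the actual value satisfies "key==value" or "key!=value".
# Returns 2 for an expression it does not understand.
matches_constraint() {
  case "$1" in
    *==*) [ "${1#*==}" = "$2" ] ;;
    *!=*) [ "${1#*!=}" != "$2" ] ;;
    *) return 2 ;;
  esac
}
```

For example, `matches_constraint 'node==ip-172-31-52-155' 'ip-172-31-52-155'` succeeds, while the same expression fails for `ip-172-31-52-156`, so that node would be filtered out.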
