The endless scroll problem in OTT

Think about how many times you’ve opened your OTT app, scrolled for 15-20 minutes, and still couldn’t decide what to watch. It’s frustrating, right? In today’s world of limitless entertainment, finding what suits you right now is harder than finding out what’s available. AI-driven personalization aims to close that gap and to keep the experience consistent across mobile, web, and smart TV apps.

The appeal of AI-driven personalization

Imagine opening your OTT (over-the-top) app and typing or saying: “Show me thrillers and comedies in Hindi and English, released in the last 5 years, with ratings above 8.”

Within seconds, your home screen adjusts to show exactly that. No endless scrolling. No digging through filters hidden in menus. Just one simple prompt and your app understands you. That’s the idea behind AI prompt-based home screen personalization. With advanced language models and generative AI development services, OTT platforms can interpret complex user prompts in real time. Instead of a one-size-fits-all homepage, your OTT platform becomes a session-based personal theatre.

By leveraging AI-powered OTT development and smart TV app capabilities, platforms can turn metadata, ratings, and viewing patterns into instant, session-based recommendations.

Why this matters for viewers

- Faster discovery: You get what you want instantly, based on mood, language, genre, or even ratings

- More control: Your preferences aren’t locked in forever; they last only for the session. Once you log out, your home screen goes back to default

- A natural interaction: Voice or text prompts make the app feel like you’re having a quick conversation with it

In short, the app adapts to you, not the other way around.

Why it matters to OTT companies

OTT platforms operate in a highly competitive media and entertainment ecosystem and constantly compete for users’ attention and loyalty. A personalized, AI-powered home screen helps in several ways:

- More engagement: With less searching, viewers consume more content

- Better retention: When viewers have the ability to select content according to their mood, they churn less

- Upselling opportunities: Personalized prompts make it possible to suggest premium content, subscriptions, or even franchises that a user may not have found otherwise

- Strategic differentiation: AI-driven personalization positions platforms as innovative and user-centric, attracting and retaining high-value subscribers

- Revenue growth opportunities: Tailored suggestions can boost content consumption, upsell premium subscriptions, and enhance overall monetization

The objective is to create a more thoughtful and responsive way to interact with entertainment platforms. AI turns OTT apps from static content shelves into dynamic, living experiences.

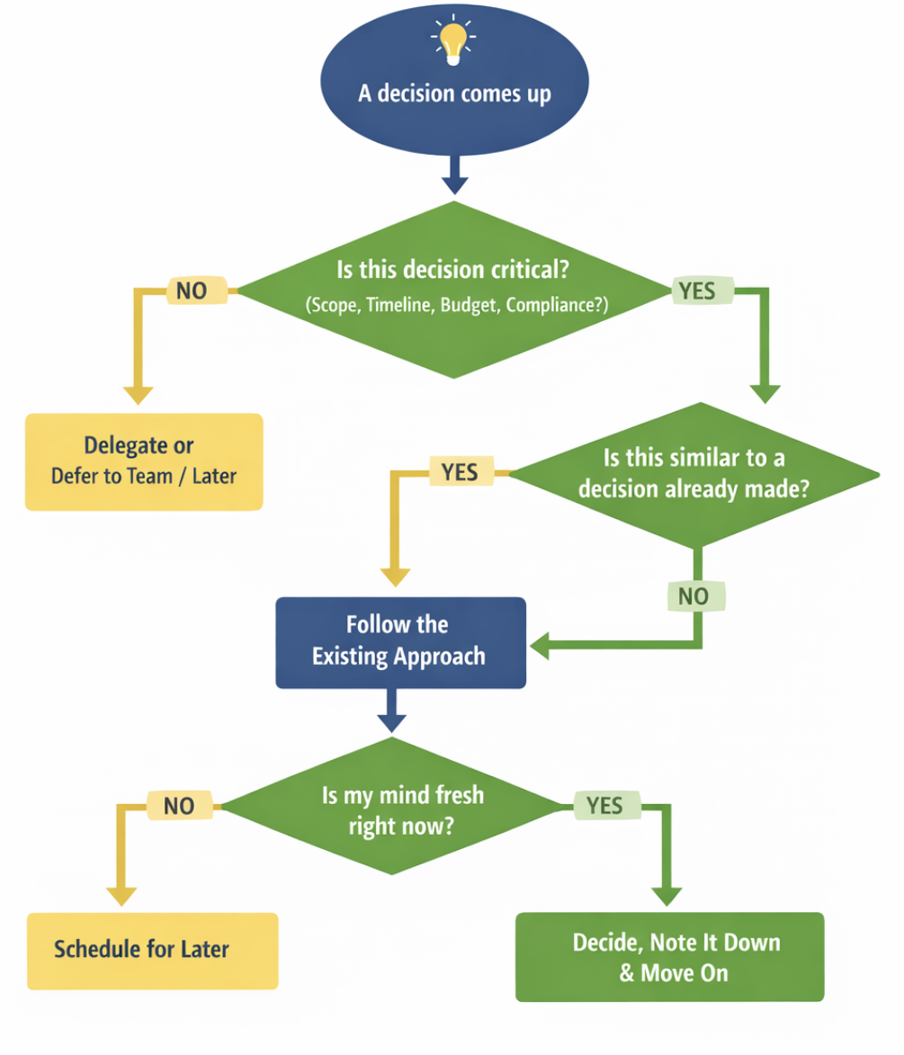

How can this be achieved?

Personalizing your OTT home screen with a simple prompt might sound like science fiction, but it is entirely feasible. When you type or speak a prompt like “Show me thrillers in Hindi released in the last 5 years”, the app’s AI interprets your request. It breaks the request down into simple filters such as language, genre, and release year, then scans the OTT library to find matching titles.
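As a rough illustration of that prompt-to-filter step, here is a minimal Python sketch. It is not how any particular platform does it: a production system would hand the prompt to a language model, whereas this sketch swaps in simple keyword and regex rules so it can run on its own. The names `Title`, `Filters`, `parse_prompt`, `matches`, and `build_home_screen`, along with the keyword tables, are all hypothetical.

```python
import re
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional, Set

# Hypothetical keyword tables; a real system would derive these from catalog metadata.
GENRE_WORDS = {"thriller": "thriller", "thrillers": "thriller",
               "comedy": "comedy", "comedies": "comedy"}
LANGUAGES = {"hindi", "english"}


@dataclass
class Title:
    name: str
    genre: str
    language: str
    year: int
    rating: float


@dataclass
class Filters:
    genres: Set[str] = field(default_factory=set)
    languages: Set[str] = field(default_factory=set)
    min_year: Optional[int] = None
    min_rating: Optional[float] = None


def parse_prompt(prompt: str, today: date) -> Filters:
    """Rule-based stand-in for the language model: turn a prompt into filters."""
    text = prompt.lower()
    words = re.findall(r"[a-z]+", text)
    filters = Filters(
        genres={GENRE_WORDS[w] for w in words if w in GENRE_WORDS},
        languages={w for w in words if w in LANGUAGES},
    )
    years = re.search(r"last (\d+) years?", text)
    if years:
        filters.min_year = today.year - int(years.group(1))
    rating = re.search(r"ratings? above (\d+(?:\.\d+)?)", text)
    if rating:
        filters.min_rating = float(rating.group(1))
    return filters


def matches(title: Title, f: Filters) -> bool:
    """An unset filter matches everything; every filter that is set must pass."""
    if f.genres and title.genre not in f.genres:
        return False
    if f.languages and title.language not in f.languages:
        return False
    if f.min_year is not None and title.year < f.min_year:
        return False
    if f.min_rating is not None and title.rating <= f.min_rating:
        return False  # "above 8" is read as strictly greater than 8
    return True


def build_home_screen(prompt: str, catalog: List[Title], today: date) -> List[str]:
    """Scan the catalog and keep the names of titles that satisfy the prompt."""
    filters = parse_prompt(prompt, today)
    return [t.name for t in catalog if matches(t, filters)]
```

The current date is passed in rather than read from the system clock, which keeps the "last 5 years" calculation easy to test. Swapping `parse_prompt` for a model call that returns the same `Filters` structure would leave the rest of the sketch unchanged.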

Within seconds, your home screen updates to show exactly what you asked for, no extra scrolling or searching. And since it’s session-based, once you log out, your home screen resets to its original default.
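The session-based behavior can be sketched in a few lines of Python. This is only one possible design, assuming the personalized layout is held in memory against a session identifier and never written to the user's stored profile; `HomeScreenSessions`, `DEFAULT_ROWS`, and the method names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical default layout shown when no prompt has been applied.
DEFAULT_ROWS = ["Trending Now", "New Releases", "Continue Watching"]


@dataclass
class HomeScreenSessions:
    """Keeps prompt-driven layouts per session, never in the user's profile."""
    _overrides: Dict[str, List[str]] = field(default_factory=dict)

    def apply_prompt(self, session_id: str, rows: List[str]) -> None:
        # Store a copy so later changes by the caller do not leak in.
        self._overrides[session_id] = list(rows)

    def rows_for(self, session_id: str) -> List[str]:
        # Fall back to the default layout when the session has no override.
        return list(self._overrides.get(session_id, DEFAULT_ROWS))

    def logout(self, session_id: str) -> None:
        # Dropping the override is what resets the home screen.
        self._overrides.pop(session_id, None)
```

Because the override lives only in the session store, logging out is enough to restore the default home screen, and nothing has to be undone in the user's long-term preferences.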

Service providers specializing in OTT development and media services, like TO THE NEW, help platforms integrate these AI models efficiently, ensuring personalized experiences on every device, from mobile to smart TVs.

The road ahead

In the near future, you might be able to just say: “Show me a quick watch under 30 minutes before bed.” And your home screen will adapt instantly. That’s the future AI is unlocking, one where your OTT app feels less like a library, and more like a companion that just gets you.

Companies that adopt these technologies early can set industry benchmarks in engagement, retention, and monetization.