Boosting Code Quality and Collaboration with CodeRabbit

Introduction

In modern cloud engineering projects, speed and quality often clash. Teams want to ship features fast, but rushed reviews, inconsistent coding standards, and manual bottlenecks slow progress. That’s where CodeRabbit, an AI-driven code review tool, makes a difference.

By automating repetitive review tasks and providing intelligent feedback, CodeRabbit helps engineers focus on higher-value work while giving managers confidence in consistent quality.

Before introducing any AI tooling, our review process looked fairly typical for a DevOps-focused team. Developers would push changes to GitHub/GitLab and open a merge request. From there, reviewers would step in and manually go through the code. At first glance, this seems perfectly fine. But once the number of changes increased and multiple teams started contributing to the same repositories, a few pain points became obvious.

The Pain Points That Drove Us Crazy

We realised we were losing hours to “nitpicking”. A huge chunk of our review time was spent pointing out the same small things over and over:

- Inconsistent variable naming (camelCase vs snake_case wars).

- Formatting differences that didn’t actually matter for logic.

- Random unused imports that someone forgot to prune.

It’s frustrating for everyone. As a reviewer, I’d find myself leaving five comments about style before I even looked at the actual architecture. By the time I got to the “real” code, I was already mentally drained.

Then there’s the “queue fatigue”. We’ve all been there: a simple one-line fix sits in a merge request for six hours because the lead engineer is tied up in an incident response. The development cycle just grinds to a halt.

Inconsistent Review Standards

Another issue was inconsistency. Different engineers naturally focus on different aspects during reviews. Some reviewers are strict about formatting or naming conventions, while others care more about performance or maintainability.

This meant developers sometimes received completely different feedback depending on who reviewed their code.

Over time, that inconsistency started creating small inefficiencies in the workflow.

Merge Requests Sitting in Queues

During busy development cycles, merge requests occasionally sat in the review queue longer than expected. This isn’t unusual — reviewers are often juggling operational work, incident response, infrastructure changes, and feature development all at once. But when a simple change waits several hours (or longer) for feedback, the development cycle slows down.

Reviewer Fatigue

Manual code review requires attention to detail.

When reviewers are forced to examine everything from formatting to architecture, it becomes easy to miss bigger issues simply because the review process becomes mentally exhausting.

That’s where the idea of introducing an AI assistant for code reviews started to make sense.

We Needed a Better Way to Review Code

In modern cloud engineering, we’re constantly caught in a tug-of-war between speed and quality. My team wanted to ship features yesterday, but rushed reviews and inconsistent standards usually end up biting us later.

Before we brought CodeRabbit into the mix, our review process was entirely manual. A dev would push a change to GitLab and open a merge request, a reviewer would read through the code and leave comments, and then we’d wait for the dev to push the suggested changes so that we could review them all over again.

At first glance, it worked. But as our repositories grew and more teams started jumping into the same codebase, the cracks started to show. Honestly, it became a bottleneck.

Bringing in CodeRabbit

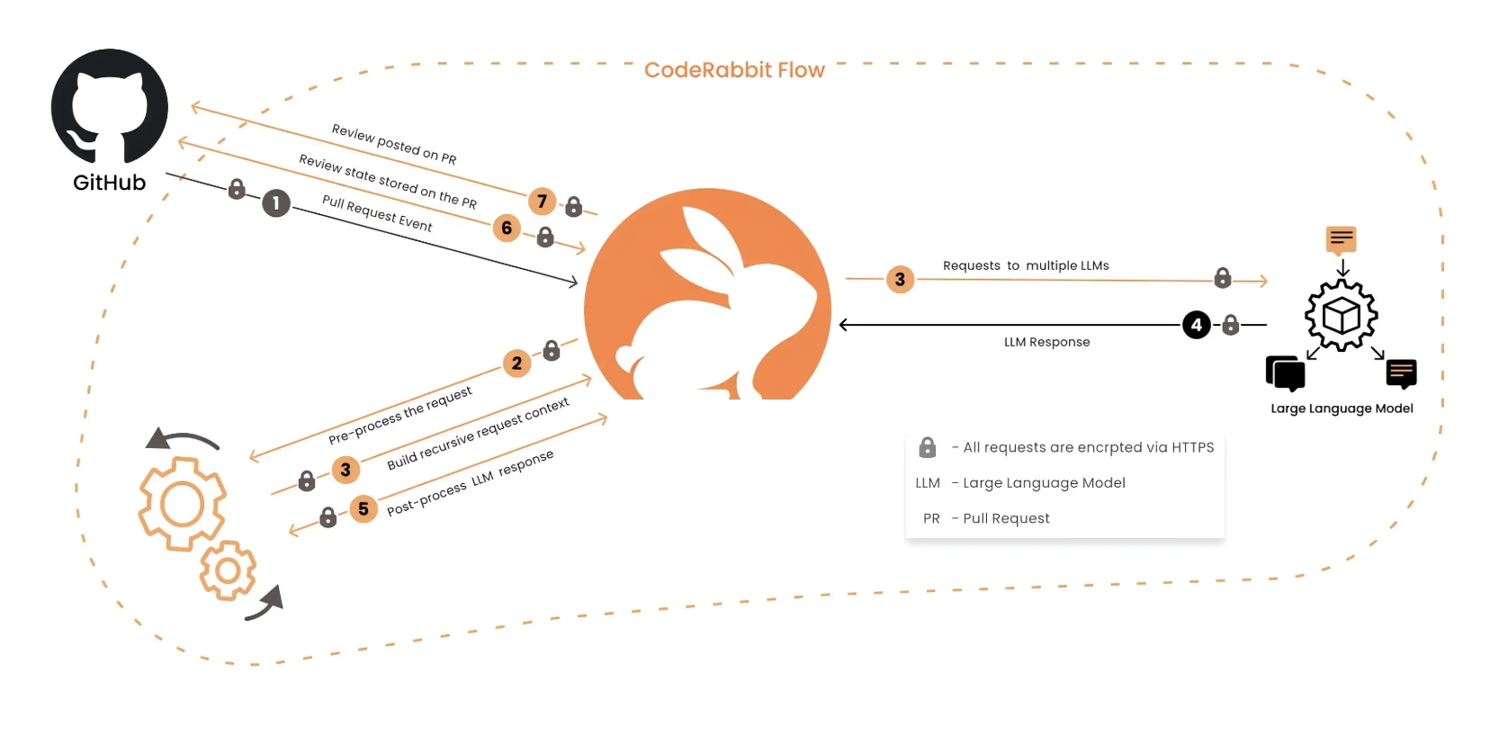

We decided to try CodeRabbit as an AI-powered “first-pass” reviewer. The setup was painless, and it now just lives in our workflow. The idea wasn’t to replace our engineers, but to give them a shield. Now, when a dev opens a merge request, CodeRabbit hits it instantly and catches the “noise” before a human even lays eyes on it.

What it catches for us is surprisingly context-aware. It doesn’t just bark about rules; it explains why a pattern might be inefficient or where a potential logic bug is hiding. We’ve seen it flag everything from security concerns to resources that were defined but never used. The diagram below shows how we use CodeRabbit in our repository workflow.

CodeRabbit in repository workflow

The key idea is simple: let automation handle the repetitive checks so human reviewers can focus on more meaningful discussions.

What CodeRabbit Actually Catches

After running it for a couple of weeks in our repositories, we started noticing patterns in the things CodeRabbit flagged. Most of the time it wasn’t huge architectural problems — it was the little things that quietly creep into a codebase over time.

For example:

- potential bugs in logic

- unused variables or resources

- security-related concerns

- inefficient patterns

- naming inconsistencies

- formatting problems

One interesting aspect is that the feedback is usually context-aware rather than purely rule-based. Instead of simply saying something is wrong, CodeRabbit often explains why a change might improve the code. For developers, this makes it easier to evaluate each suggestion and decide whether it is worth implementing.
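
To make two of the categories above concrete (unused imports and naming inconsistencies), here is a minimal Python sketch of the kind of mechanical check that a first-pass reviewer takes off a human’s plate. It is purely illustrative: it relies on Python’s standard `ast` module, the function name `first_pass_findings` and the naming rule are assumptions made for this example, and it says nothing about how CodeRabbit works internally.

```python
import ast
import re

# Assumed naming rule for this sketch: snake_case names or UPPER_CASE constants.
ALLOWED_NAME = re.compile(r"^_*(?:[a-z][a-z0-9_]*|[A-Z][A-Z0-9_]*)?$")


def first_pass_findings(source: str) -> list[str]:
    """Flag unused imports and non-snake_case assignments in Python source."""
    tree = ast.parse(source)
    imported: dict[str, int] = {}        # bound name -> line of the import
    used: set[str] = set()               # every bare name that appears in the code
    findings: list[tuple[int, str]] = []

    for node in ast.walk(tree):
        if isinstance(node, (ast.Import, ast.ImportFrom)):
            for alias in node.names:
                if alias.name == "*":
                    continue  # star imports cannot be tracked by name
                # "import a.b" binds "a"; "import a as x" binds "x"
                imported[alias.asname or alias.name.split(".")[0]] = node.lineno
        elif isinstance(node, ast.Name):
            used.add(node.id)
            if isinstance(node.ctx, ast.Store) and not ALLOWED_NAME.match(node.id):
                findings.append((node.lineno, f"'{node.id}' is not snake_case"))

    for name, lineno in imported.items():
        if name not in used:
            findings.append((lineno, f"unused import '{name}'"))

    # Sort by line number so the report reads top to bottom.
    return [f"line {lineno}: {message}" for lineno, message in sorted(findings)]


if __name__ == "__main__":
    sample = "import os\nimport sys\nretryCount = 3\nprint(sys.argv)\n"
    for finding in first_pass_findings(sample):
        print(finding)
```

For the sample above, this reports the unused `os` import on line 1 and the camelCase `retryCount` on line 3. A rule-based check like this can only say that something is off; the value described in this section comes from feedback that also explains why.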

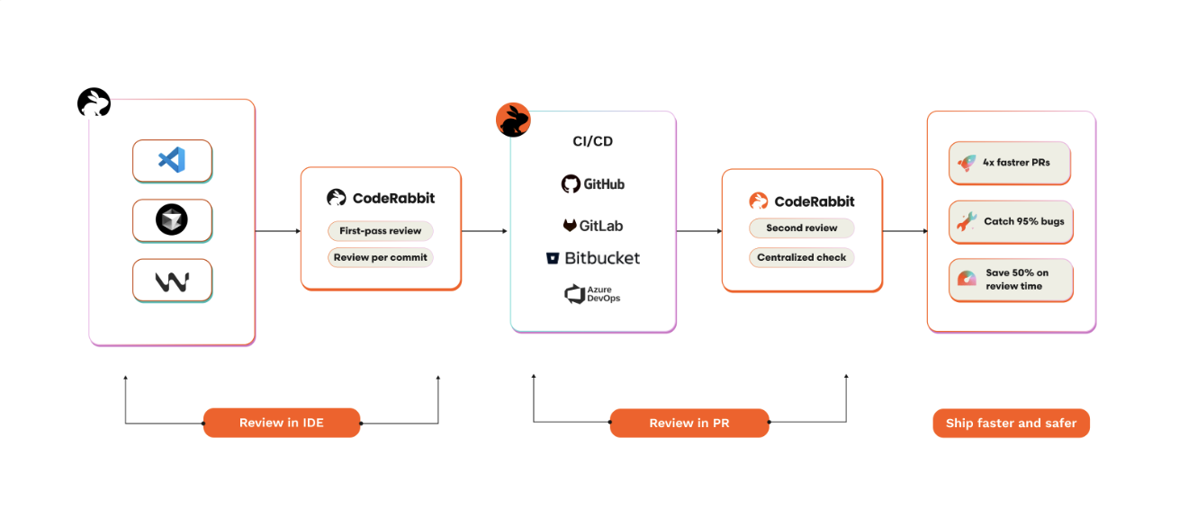

Where CodeRabbit Fits in the DevOps Workflow

From a DevOps perspective, the biggest advantage is that CodeRabbit integrates directly into the existing CI/CD workflow. No separate tooling or manual steps were required.

The typical flow now looks something like this:

CodeRabbit in DevOps workflow

Because the automated feedback arrives quickly, developers can resolve most issues before the human review even begins.
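
The ordering of that flow can also be sketched in a few lines of Python. This is a toy model under stated assumptions, not an integration: the `MergeRequest` class and its method names are hypothetical and do not correspond to any CodeRabbit or GitLab API. The only rules it encodes are the ones described in this post: automated feedback arrives first, the developer fixes or dismisses it, and a human still has to approve before anything is merged.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MergeRequest:
    """Hypothetical model of one merge request moving through review."""
    title: str
    ai_comments: list[str] = field(default_factory=list)   # open automated findings
    history: list[str] = field(default_factory=lambda: ["opened"])
    approved_by: Optional[str] = None

    def run_ai_first_pass(self, findings: list[str]) -> None:
        # Automated feedback arrives shortly after the merge request is opened.
        self.ai_comments = list(findings)
        self.history.append("ai_first_pass")

    def resolve(self, finding: str) -> None:
        # The developer either fixes the issue or dismisses the suggestion.
        self.ai_comments.remove(finding)

    def approve(self, reviewer: str) -> None:
        # Human review happens after the first pass; AI comments are advisory.
        self.approved_by = reviewer
        self.history.append("human_review")

    def merge(self) -> None:
        # The final decision stays with a human reviewer.
        if self.approved_by is None:
            raise PermissionError("a human reviewer must approve before merging")
        self.history.append("merged")


if __name__ == "__main__":
    mr = MergeRequest("Fix retry timeout")
    mr.run_ai_first_pass(["unused import 'os'"])
    mr.resolve("unused import 'os'")
    mr.approve("lead-engineer")
    mr.merge()
    print(" -> ".join(mr.history))
```

Note that the sketch does not block human review on open automated comments. That is a deliberate choice here, since the automated pass is treated as advice rather than as a gate.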

What Changed After Adoption

The difference wasn’t dramatic overnight, but after a few weeks we started noticing some clear improvements.

Reviews Became More Focused

Human reviewers were no longer spending time commenting on small formatting issues. Most of those were already flagged earlier by CodeRabbit. As a result, review discussions shifted towards the following:

- architecture decisions

- infrastructure impact

- scalability considerations

- maintainability

That is exactly what code reviews should focus on. The flow chart below shows how CodeRabbit handles a request and integrates with an LLM to produce a response or suggestion.

CodeRabbit workflow

Faster Feedback for Developers

Another noticeable improvement was how quickly developers received feedback. Previously, developers sometimes had to wait for reviewers to become available. With CodeRabbit, initial suggestions appear within minutes of opening the merge request. This makes the development process feel much more responsive.

Less Review Fatigue

Reviewing large pull requests can be exhausting. By the time reviewers reach the end of a diff, they may already be mentally drained from checking small details. Automating the smaller checks helps reduce that fatigue and allows reviewers to stay focused on higher-level concerns.

Benefits Beyond Engineering

Interestingly, the benefits weren’t limited to developers.

From a team management perspective, a few additional improvements became visible over time. Release cycles became slightly faster because merge requests moved through the review pipeline more efficiently. The codebase also became more consistent because automated feedback applies the same standards to every pull request. For new engineers joining the team, the automated suggestions also act as a subtle learning tool — they quickly see the kinds of patterns and practices expected in the repository.

One thing we appreciated later was that CodeRabbit didn’t force us to change our existing tools. It works with the IDEs and CI/CD systems we were already using, which made adoption surprisingly smooth. It follows a multi-layered approach, with reviews in both the IDE and the Git platform, so we could keep using our existing IDE and version control tool.

AI code reviews in IDE & Git platform

A Quick Reality Check

Look, I’m not saying it’s perfect. Every now and then, CodeRabbit suggests something that makes us roll our eyes, such as a “fix” for something we intentionally wrote a certain way for a specific edge case. For example, we have some legacy code in which parameters are hardcoded rather than passed as variables. CodeRabbit suggests replacing those hardcoded values with variables, but implementing that could break the entire pipeline. That’s where the human element is still vital. We use it as an assistant, not a boss. If it suggests something weird, we ignore it. The final “Merge” button is still ours to click.
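
One way to picture “assistant, not a boss” is a triage step that sets aside suggestions touching known-legacy paths for a human decision instead of applying them. The sketch below is an illustration under loud assumptions: the `Suggestion` type, the `triage` function, and the path patterns are all hypothetical, and none of this is a CodeRabbit feature or API.

```python
from dataclasses import dataclass
from fnmatch import fnmatch

# Hypothetical patterns for code where hardcoded values are intentional.
LEGACY_PATTERNS = ["legacy/*", "pipelines/old_*.yml"]


@dataclass(frozen=True)
class Suggestion:
    path: str      # file the suggestion refers to
    message: str   # what the automated reviewer proposed


def triage(suggestions, legacy_patterns=LEGACY_PATTERNS):
    """Split suggestions into (actionable, needs_human_judgement)."""
    actionable, needs_judgement = [], []
    for suggestion in suggestions:
        # fnmatch's "*" also matches "/", so "legacy/*" covers nested files.
        if any(fnmatch(suggestion.path, pattern) for pattern in legacy_patterns):
            needs_judgement.append(suggestion)
        else:
            actionable.append(suggestion)
    return actionable, needs_judgement


if __name__ == "__main__":
    incoming = [
        Suggestion("legacy/deploy/params.yml", "replace hardcoded value with a variable"),
        Suggestion("src/app.py", "remove unused import"),
    ]
    actionable, needs_judgement = triage(incoming)
    print("apply:", [s.path for s in actionable])
    print("ask a human:", [s.path for s in needs_judgement])
```

Nothing is discarded in this sketch; legacy-path suggestions are only separated out so that someone who knows why the values are hardcoded can make the call.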

Final Thoughts

Code reviews are arguably the most valuable thing we do to keep our codebase from turning into a mess. But as we scale, we can’t just throw more manual labour at the problem. Integrating CodeRabbit helped us offload the repetitive “drudge work” of reviews and made the process smoother for both developers and reviewers. It didn’t just make our process faster; it made it more meaningful. We’re finally spending our time discussing design and reliability instead of syntax, and that’s exactly where we want to be.