AWS Security Re-Check

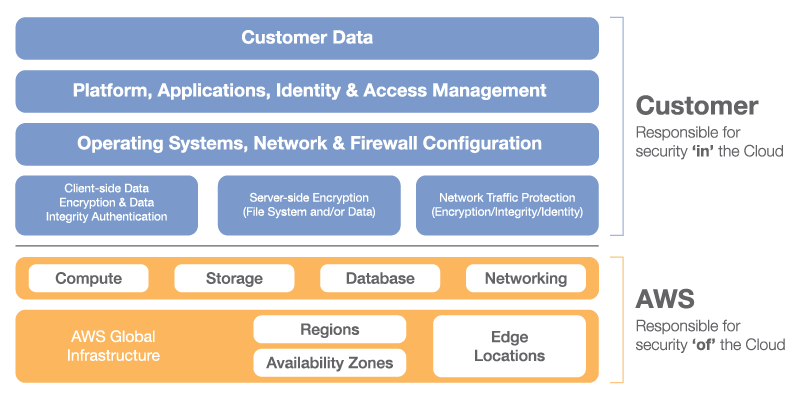

Security is of prime importance for any cloud vendor, including AWS. AWS follows a Shared Responsibility Model for security: as the name suggests, security on AWS is not the sole responsibility of either AWS or the customer but a combined effort from both parties. AWS is responsible for providing a secure global infrastructure and services, while the customer is responsible for protecting the confidentiality, integrity, and availability of their data in the cloud.

The figure given below depicts the clear line of separation between the responsibilities:

Image courtesy: aws.amazon.com

When using any AWS service, customers must perform security checks regularly to meet the critical security requirements of their data.

This blog describes the basic security checks that should be performed on every AWS account, explains why they should be performed, and provides code snippets to automate each of them.

Server Hosting

First of all, you need to identify where your servers are hosted. Your account may support both EC2-VPC and EC2-Classic; if the account was created after 4th Dec 2013, it will only support EC2-VPC. VPC is always recommended because it offers better and more flexible security. If you have servers running on Classic, you should plan to move them to VPC as early as possible. So, if you are using an older account, a check for any server still running on EC2-Classic becomes necessary.

Here is the Python snippet that checks all instances and lists the details of any that exist in EC2-Classic; the check should be run for every region:

[sourcecode language="python"]
# Assumes `connection` is a boto EC2 connection and `csvwriter` is a csv.writer.
reservations = connection.get_all_instances()
for reservation in reservations:
    for instance in reservation.instances:
        # Instances running in EC2-Classic have no VPC ID.
        if instance.vpc_id is None:
            # Not every instance has a Name tag, so fall back to an empty string.
            data = ['', instance.tags.get('Name', ''), instance.id, '']
            csvwriter.writerow(data)
[/sourcecode]

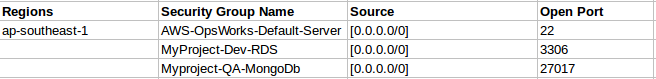
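To cover every region, the same check can be repeated once per region. Below is a minimal sketch of how that could look, assuming boto 2 is installed and credentials are configured. The helper names `find_classic_instances` and `scan_all_regions` are my own for illustration and are not taken from the script.

```python
def find_classic_instances(instances):
    """Return (name, instance_id) pairs for instances that are not in a VPC."""
    found = []
    for instance in instances:
        # EC2-Classic instances carry no VPC ID.
        if instance.vpc_id is None:
            found.append((instance.tags.get('Name', ''), instance.id))
    return found


def scan_all_regions():
    """Yield (region, name, instance_id) for each EC2-Classic instance.

    Assumes boto 2 and valid credentials. boto is imported lazily so that
    find_classic_instances() can be used without it.
    """
    import boto.ec2
    for region in boto.ec2.regions():
        connection = boto.ec2.connect_to_region(region.name)
        if connection is None:
            continue
        for reservation in connection.get_all_instances():
            for name, instance_id in find_classic_instances(reservation.instances):
                yield region.name, name, instance_id
```

Regions that your credentials cannot access may raise an error, so a real run would probably need to skip those.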

Firewall

Next is the firewall layer. Once you have placed your servers in a VPC, you must configure perimeter security for each of your instances. In AWS, the firewall is called a Security Group. Here you define which machines (or groups of machines) can access a particular instance and on which port. It's always recommended to restrict access to specific IPs. So, there should be another check to see whether someone has accidentally opened any port to the public (0.0.0.0/0).

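[sourcecode language="python"]
# Assumes `connection` is a boto EC2 connection and `csvwriter` is a csv.writer.
sg = connection.get_all_security_groups()
for group in sg:
    for rule in group.rules:
        # A grant of 0.0.0.0/0 means the rule is open to the whole internet.
        if '0.0.0.0/0' in str(rule.grants):
            # No port on the rule means all ports are open.
            if rule.to_port is None:
                rule.to_port = 'ALL'
            data = ['', group.name, rule.grants, rule.to_port]
            csvwriter.writerow(data)
[/sourcecode]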

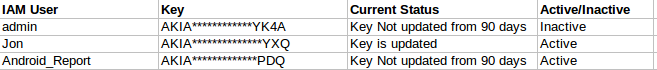
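The check above looks for the literal text 0.0.0.0/0. A slightly stricter variant is to parse each CIDR and treat any block with a prefix length of zero as public, which would also catch the IPv6 equivalent `::/0`. This is only a sketch: the function names and the `(group_name, cidr, port)` tuple layout are assumptions of mine, not something the script uses.

```python
import ipaddress


def is_open_to_world(cidr):
    """Return True if the CIDR block matches every address (prefix length 0)."""
    try:
        network = ipaddress.ip_network(cidr, strict=False)
    except ValueError:
        # Not a CIDR at all, e.g. a reference to another security group.
        return False
    return network.prefixlen == 0


def public_rules(rules):
    """Keep only the (group_name, cidr, port) tuples that are open to the world."""
    return [
        (group, cidr, 'ALL' if port is None else port)
        for group, cidr, port in rules
        if is_open_to_world(cidr)
    ]
```

For example, `public_rules([('web', '0.0.0.0/0', None), ('db', '10.0.0.0/8', 3306)])` keeps only the `web` entry and reports its port as `'ALL'`.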

IAM Key Rotation

IAM access keys can be used to access your account. Anyone who holds the access key ID and secret access key of your account has the same permissions to make changes to it as you do. So, to reduce the risk of leaked and misused access keys, IAM keys should be rotated every 90 days as a security best practice.

[sourcecode language="python"]
# val, keys, diff and activekeys are parallel lists built earlier in the full
# script; diff holds the age of each key in days.
for value in range(len(val)):
    if diff[value] > 90:
        data = [val[value], keys[value], 'Key not rotated for 90 days', activekeys[value]]
    else:
        data = [val[value], keys[value], 'Key is rotated', activekeys[value]]
    csvwriter.writerow(data)
[/sourcecode]

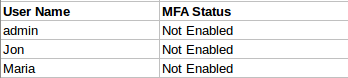
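The 90-day rule comes down to computing the age of each key from its creation date. Here is a minimal sketch of that calculation, assuming the creation date arrives as an ISO 8601 UTC string such as `2015-01-31T10:20:30Z`. The function names and the `ROTATION_DAYS` constant are hypothetical and only serve the illustration.

```python
from datetime import datetime, timezone

ROTATION_DAYS = 90  # threshold recommended in the text above


def key_age_days(create_date, now=None):
    """Return the age, in whole days, of a key created at `create_date`.

    `create_date` is assumed to look like '2015-01-31T10:20:30Z' (UTC).
    """
    created = datetime.strptime(create_date, '%Y-%m-%dT%H:%M:%SZ')
    created = created.replace(tzinfo=timezone.utc)
    if now is None:
        now = datetime.now(timezone.utc)
    return (now - created).days


def rotation_status(create_date, now=None):
    """Return a short status message for a key based on its age."""
    if key_age_days(create_date, now) > ROTATION_DAYS:
        return 'Key not rotated for 90 days'
    return 'Key is rotated'
```

Passing `now` explicitly makes the check reproducible, which is handy when comparing reports generated on different days.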

Multi-Factor Authentication

MFA (Multi-Factor Authentication) is an added layer of security that requires more than one method of authentication. Users configure a device or phone number and must enter a unique authentication code from their approved device or text message whenever they log in to their account and access AWS services.

This prevents someone with your leaked root credentials from making any changes to your account.

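[sourcecode language="python"]
# Assumes `connection` is a boto IAM connection, `users` is the response of
# connection.get_all_users() and `no_of_users` is the number of users in it.
for user in range(0, no_of_users):
    user_name = users['list_users_response']['list_users_result']['users'][user]['user_name']
    mfa = connection.get_all_mfa_devices(user_name)
    status = mfa['list_mfa_devices_response']['list_mfa_devices_result']['mfa_devices']
    # An empty device list means MFA is not enabled for this user.
    if len(status) == 0:
        data = [user_name, 'Not Enabled']
        csvwriter.writerow(data)
[/sourcecode]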
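The same MFA check can be written as a small function that walks the user list directly instead of indexing into it, which also makes it easy to try out without touching AWS. This is a sketch under the assumption that the responses have the nested shape shown above; `users_without_mfa` and its `list_devices` parameter are names I made up for the example.

```python
def users_without_mfa(users_response, list_devices):
    """Return the names of users that have no MFA device.

    `users_response` has the nested shape returned by get_all_users().
    `list_devices` is any callable that takes a user name and returns the
    matching MFA response, e.g. connection.get_all_mfa_devices.
    """
    users = users_response['list_users_response']['list_users_result']['users']
    missing = []
    for user in users:
        name = user['user_name']
        response = list_devices(name)
        devices = response['list_mfa_devices_response']['list_mfa_devices_result']['mfa_devices']
        if not devices:
            missing.append(name)
    return missing
```

With a real connection this would be called as `users_without_mfa(connection.get_all_users(), connection.get_all_mfa_devices)`.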

User Activity Tracking

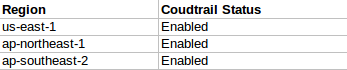

As the number of users in an organization increases, it becomes harder to track what changes each individual is making. As an owner, you might want to see what action was taken on which AWS resource and which user is accountable for it. AWS suggests having CloudTrail enabled for all regions in an account. CloudTrail logs API calls: it records information about each API call made on your account and stores it in an S3 bucket. You can restrict access to the log information by managing IAM roles. With API call logging, you can track the changes made to your AWS resources.

[sourcecode language="python"]
import boto.cloudtrail

# `r_name` is the name of the region being checked.
connection = boto.cloudtrail.connect_to_region(r_name)
c_trail = connection.describe_trails()
# An empty trail list means CloudTrail is not enabled in this region.
if not c_trail['trailList']:
    data = [r_name, "Not Enabled"]
else:
    data = [r_name, "Enabled"]
csvwriter.writerow(data)
[/sourcecode]
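Since CloudTrail should be enabled in every region, the check has to run once per region. A minimal sketch of that loop follows, assuming boto 2 and configured credentials. `trail_status` and `check_all_regions` are illustrative names, not functions from the script.

```python
def trail_status(region_name, describe_response):
    """Build a [region, status] row from a describe_trails() response."""
    enabled = bool(describe_response.get('trailList'))
    return [region_name, 'Enabled' if enabled else 'Not Enabled']


def check_all_regions():
    """Return one [region, status] row per CloudTrail region.

    Assumes boto 2 and valid credentials. boto is imported lazily so that
    trail_status() can be used on its own.
    """
    import boto.cloudtrail
    rows = []
    for region in boto.cloudtrail.regions():
        connection = boto.cloudtrail.connect_to_region(region.name)
        rows.append(trail_status(region.name, connection.describe_trails()))
    return rows
```

Each returned row can be passed straight to `csvwriter.writerow`.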

Data Security

Data security is a big challenge these days. Customers keep both critical and non-critical data on the AWS storage service (S3), inside buckets that are available in all regions. It is necessary to restrict the level of access users have to these buckets. Each bucket supports permissions such as READ, WRITE, READ_ACP (read the bucket's access control policy) and FULL_CONTROL, which are granted to different users as required.

There should be a check to verify that users are given only the permissions they require. For example, if my application needs to read from and write to a bucket, those permissions should be granted to a dedicated user, not to everyone. Likewise, if a bucket must be readable by everyone, public access should be limited to read-only permission and never include write or ACP-related permissions. For additional security, data can be encrypted before it is pushed to the bucket.

[sourcecode language="python"]
# Assumes `buckets` is a list of boto S3 buckets and `csvwriter` is a csv.writer.
for bucket in buckets:
    bucket_policy = bucket.get_acl()
    user_grants = bucket_policy.acl.grants
    # First row for the bucket: its name and owner.
    data = [bucket.name.title(), bucket_policy.owner.display_name, ' ']
    csvwriter.writerow(data)
    # One row per grant: who has which permission.
    for user in user_grants:
        uname = user.display_name
        user_permission = user.permission
        # Group grants have no display name, so use the last part of their URI.
        if uname is None:
            uname = str(user.uri.split('/')[-1])
        data = ['', '', uname, user_permission]
        csvwriter.writerow(data)
[/sourcecode]
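The listing above shows every grant, but the rule from the text (public access must never go beyond read-only) can also be checked automatically. Here is a small sketch, assuming the grantee name is the last part of the grant URI as in the snippet, so that the S3 groups show up as `AllUsers` and `AuthenticatedUsers`. The function name and the tuple layout are my own choices for the example.

```python
# Predefined S3 groups that represent "everyone".
PUBLIC_GROUPS = {'AllUsers', 'AuthenticatedUsers'}


def risky_grants(grants):
    """Return the (grantee, permission) pairs that break the read-only rule.

    `grants` is an iterable of (grantee, permission) tuples. A grant is
    flagged when a public group holds anything other than READ.
    """
    return [
        (grantee, permission)
        for grantee, permission in grants
        if grantee in PUBLIC_GROUPS and permission != 'READ'
    ]
```

For example, `risky_grants([('AllUsers', 'READ'), ('AllUsers', 'WRITE')])` flags only the `WRITE` grant, while grants to a dedicated user are left alone.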

All the security checks listed above should be performed on every AWS account on a monthly or quarterly basis to make you aware of any potential risks with your account. To perform all these checks, you only need a user with read-only permissions.

I have compiled all these snippets into a single script that performs all the above checks automatically and gives you an easy-to-understand output for each check. Download the script from here.

Once executed, the script will generate an Excel sheet combining all outputs, which will look something like this:

I hope this blog has given you the reasons for doing all these checks and shown how they can be done without much effort.

For running the script:

- Python should be installed on the system.

- boto should be installed and configured.

- To be able to see the output as an Excel sheet, the additional package xlwt needs to be installed.

Simply run the script using python SecurityCheck.py.
