Adaptive bitrate streaming (ABS) is a technique used in video streaming that detects the end user’s bandwidth and adjusts the video bitrate accordingly to deliver the best possible viewing experience. It works by encoding the source into streams of different bitrates and then splitting each stream into small, multi-second chunks. A manifest file tells the client which bitrates are available, and the client uses that information to adapt the video bitrate to the end user’s available resources.
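To make the adaptation step concrete, here is a minimal sketch of how a client might choose a stream once it knows the available bitrates. The function name `pick_bitrate` and the 0.8 safety factor are assumptions made for illustration; real players use more elaborate heuristics (buffer level, throughput history and so on).

```python
def pick_bitrate(available_kbps, measured_kbps, safety=0.8):
    """Return the highest advertised bitrate that fits the measured bandwidth.

    `safety` (an illustrative assumption) keeps some headroom so that small
    bandwidth dips do not immediately stall playback.
    """
    budget = measured_kbps * safety
    fitting = [b for b in sorted(available_kbps) if b <= budget]
    # If nothing fits, fall back to the lowest bitrate rather than not playing.
    return fitting[-1] if fitting else min(available_kbps)


if __name__ == "__main__":
    ladder = [400, 600, 1000]  # the bitrates (in kbps) used in this article
    for bandwidth in (300, 900, 2000):
        print(bandwidth, "kbps measured ->", pick_bitrate(ladder, bandwidth), "kbps stream")
```

The client would repeat this decision every few chunks, which is why the stream can switch bitrate in the middle of playback.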

HLS (HTTP Live Streaming) is one of the most widely used ABS protocols and was developed by Apple for its devices. In this article we’ll describe the HLS protocol and, in the process, encode an input video at 400K, 600K and 1000K bitrates using AWS Elastic Transcoder. Along with Elastic Transcoder we will also use other AWS services: S3 to store the input and output of the transcoding process, and CloudFront to stream the video to the end user in a fast and resource-efficient way.

The basic steps for implementing HLS are listed below.

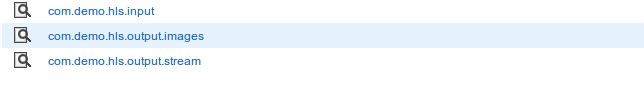

- Create input and output buckets

- Create one input bucket and two output buckets: one for the output stream and another for the images that are created alongside the output stream. These images let the user preview the video by hovering over the video timeline.

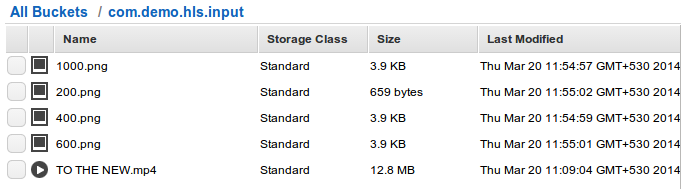

- Upload a video to the input bucket (com.demo.hls.input)

- Upload a small icon to be used as a watermark on the video, to differentiate between the bitrates. Ideally, upload images with the text 400, 600 and 1000; in later steps each of these images is watermarked onto the video of the corresponding bitrate.

- Create a process that takes the input from the input bucket, processes it and then puts the output in the output bucket. For this we’ll be using AWS Elastic Transcoder. Elastic Transcoder takes a video as input, converts it into the different streams and puts the final result in the output bucket.


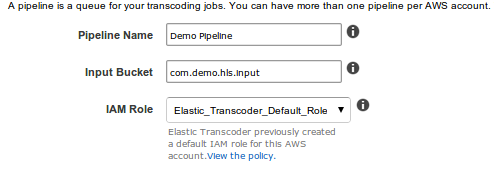

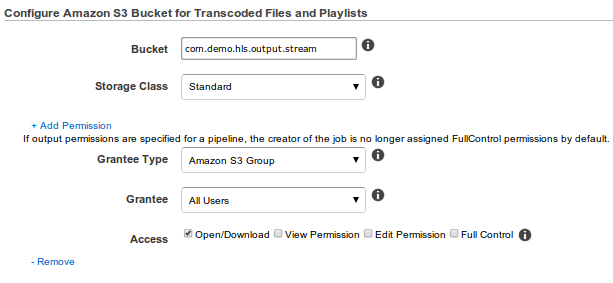

- Create a pipeline in Elastic Transcoder: in this step we specify the input and output buckets that Elastic Transcoder will use and the permissions for the output bucket.

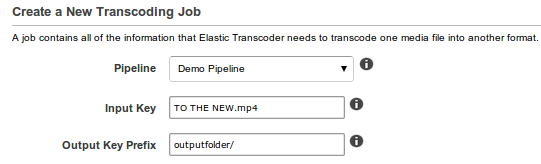
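The same pipeline can also be created programmatically. The sketch below builds the request for the Elastic Transcoder `CreatePipeline` call and, optionally, sends it with boto3. Only `com.demo.hls.input` comes from this article; the pipeline name, the output and thumbnail bucket names, the role ARN and the region are hypothetical placeholders, and the public-read permission is an assumption about how the output would be shared.

```python
def build_pipeline_request(name, input_bucket, output_bucket, thumbnail_bucket,
                           role_arn, public_read=False):
    """Build keyword arguments for the Elastic Transcoder CreatePipeline call."""
    # Assumption: grant read access to everyone only when explicitly requested.
    permissions = (
        [{"GranteeType": "Group", "Grantee": "AllUsers", "Access": ["Read"]}]
        if public_read else []
    )
    return {
        "Name": name,
        "InputBucket": input_bucket,
        "Role": role_arn,  # IAM role that Elastic Transcoder assumes
        # Transcoded segments and playlists go to one bucket ...
        "ContentConfig": {"Bucket": output_bucket, "StorageClass": "Standard",
                          "Permissions": permissions},
        # ... and thumbnails to the other.
        "ThumbnailConfig": {"Bucket": thumbnail_bucket, "StorageClass": "Standard",
                            "Permissions": permissions},
    }


def create_pipeline(request, region="us-east-1"):
    """Send the request; needs boto3 and AWS credentials (region is an assumption)."""
    import boto3  # imported lazily so the builder above works without boto3
    client = boto3.client("elastictranscoder", region_name=region)
    return client.create_pipeline(**request)["Pipeline"]["Id"]


if __name__ == "__main__":
    request = build_pipeline_request(
        name="demo-hls-pipeline",                      # hypothetical
        input_bucket="com.demo.hls.input",
        output_bucket="com.demo.hls.output",           # hypothetical
        thumbnail_bucket="com.demo.hls.thumbnails",    # hypothetical
        role_arn="arn:aws:iam::123456789012:role/Elastic_Transcoder_Default_Role",  # placeholder
    )
    print(request)
```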

- Create a job: in this step we specify the following configuration

- Common settings for all formats

- The following settings are repeated for each format

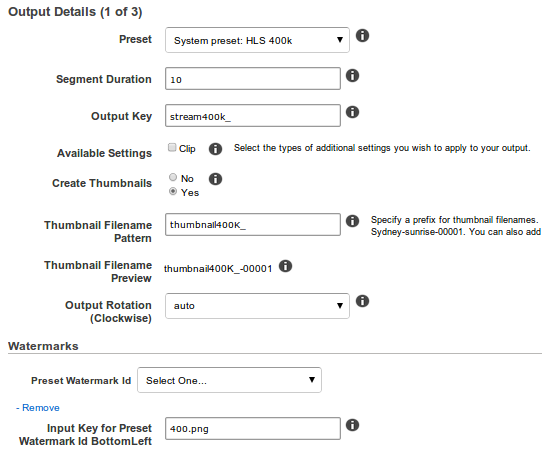

- Preset: Desired format of conversion.

- Segment Duration: Duration of each segment in seconds. Apple suggests a segment duration of 10 seconds for HLS encoding.

- Output Key: Prefix that is added to the segment file names.

- Create Thumbnails: Whether or not to create thumbnails for the video.

- Thumbnail Filename Pattern: Naming pattern of the thumbnails (prefix).

- Preset Watermark Id: Location of the watermark.

- Input Key for Preset Watermark Id: File in the input bucket that is used as the watermark image.

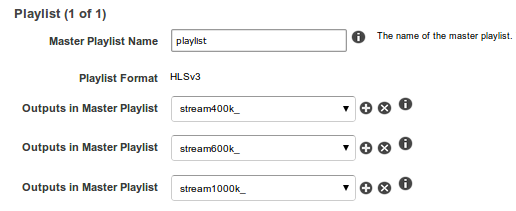

- Playlist Settings

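The job settings listed above map fairly directly onto the Elastic Transcoder `CreateJob` request. The following sketch assembles such a request for the three bitrates. The preset IDs, file keys, output prefix and playlist name are hypothetical placeholders (look up the real HLS preset IDs in the Elastic Transcoder console), and `TopRight` assumes the chosen presets define a watermark position with that ID.

```python
def build_hls_job_request(pipeline_id, input_key, renditions, playlist_name,
                          segment_seconds=10, output_prefix="hls/"):
    """Build keyword arguments for the Elastic Transcoder CreateJob call."""
    outputs = []
    for r in renditions:
        outputs.append({
            "Key": r["key"],                          # Output Key (segment prefix)
            "PresetId": r["preset_id"],               # Preset
            "SegmentDuration": str(segment_seconds),  # the API expects a string
            # Thumbnail Filename Pattern; it must contain the {count} token.
            "ThumbnailPattern": r["key"] + "-thumb-{count}",
            "Watermarks": [{
                "PresetWatermarkId": r["watermark_id"],  # position defined in the preset
                "InputKey": r["watermark_key"],          # image in the input bucket
            }],
        })
    return {
        "PipelineId": pipeline_id,
        "Input": {"Key": input_key},
        "OutputKeyPrefix": output_prefix,
        "Outputs": outputs,
        # Playlist Settings: one master playlist that references every output.
        "Playlists": [{
            "Name": playlist_name,
            "Format": "HLSv3",
            "OutputKeys": [o["Key"] for o in outputs],
        }],
    }


def submit_job(request, region="us-east-1"):
    """Send the request; needs boto3 and AWS credentials (region is an assumption)."""
    import boto3  # imported lazily so the builder above works without boto3
    client = boto3.client("elastictranscoder", region_name=region)
    return client.create_job(**request)["Job"]["Id"]


if __name__ == "__main__":
    renditions = [
        {"key": f"video_{kbps}k", "preset_id": f"<HLS-{kbps}k-preset-id>",  # placeholders
         "watermark_id": "TopRight", "watermark_key": f"{kbps}.png"}
        for kbps in (400, 600, 1000)
    ]
    print(build_hls_job_request("<pipeline-id>", "input.mp4", renditions, "master"))
```

Keeping the request builder separate from the network call makes it easy to inspect or log the job definition before submitting it.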

Once the job is created, it might take some time to complete, depending on the size of the input video. From the output bucket we can pick up the file with the playlist name that we specified in the “Master Playlist Name” field and play it in an HLS-capable player to see it in action. One such player is http://osmfhls.kutu.ru/, where we can watch the output stream adapt to our bandwidth.
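The master playlist is a plain-text manifest that lists one variant playlist per bitrate. As a rough illustration of what a player reads from it, the sketch below parses a master playlist into (bandwidth, URI) pairs. The sample content and file names are made up for this example and are not the actual output of the job above.

```python
import re

# Hypothetical master playlist for the three bitrates used in this article.
SAMPLE_MASTER = """#EXTM3U
#EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=1000000
video_1000k.m3u8
#EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=600000
video_600k.m3u8
#EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=400000
video_400k.m3u8
"""


def parse_master_playlist(text):
    """Return (bandwidth_in_bits_per_second, uri) pairs sorted by bandwidth."""
    variants = []
    pending_bandwidth = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("#EXT-X-STREAM-INF:"):
            # The lookbehind skips AVERAGE-BANDWIDTH and matches only BANDWIDTH.
            match = re.search(r"(?<![A-Z-])BANDWIDTH=(\d+)", line)
            pending_bandwidth = int(match.group(1)) if match else None
        elif line and not line.startswith("#") and pending_bandwidth is not None:
            # The first non-comment line after the tag is that variant's URI.
            variants.append((pending_bandwidth, line))
            pending_bandwidth = None
    return sorted(variants)


if __name__ == "__main__":
    for bandwidth, uri in parse_master_playlist(SAMPLE_MASTER):
        print(bandwidth // 1000, "kbps ->", uri)
```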

The output images are as follows: on my bandwidth the stream started at 1000K but then adapted itself to 600K.

I have tried the HLS format, but it is not working for high-resolution videos.