AWS Cost Optimization Series | Blog 3 | Leveraging EC2 Container Service (ECS)

Organizations are taking the plunge and adopting AWS for a variety of benefits. In this blog, I will highlight how we generated 35% cost savings for a client. To know more about the client and their environment, read the parent blog of this use case: AWS Cost Optimization Series | Blog 1 | $100K to $40K in 90 Days

Before diving into the details of the migration, I would like to shed some light on the pain points of hosting applications on EC2 instances in the client’s scenario.

- Wrong Naming Convention

- Manual deployment of all services in the ecosystem; a deployment for a single service used to take 20-30 minutes, depending on the number of instances running for that service.

- We were challenged to add 15+ services on top of the 35+ existing ones. Every service in the ecosystem required at least two EC2 instances for the Java application, with auto scaling. This setup would have required more EC2 instances, resulting in higher operational and maintenance costs. Moreover, in a microservices architecture, services are expected to be highly scalable, which comes at a high cost.

We started our journey to Docker containers with Kubernetes (K8s), the container orchestration tool originally developed at Google. The plan was to test a few QA services on K8s and, after successful testing, migrate the entire application stack to K8s. We considered K8s because it is feature-rich and provides out-of-the-box features such as application health checking, rolling updates, horizontal auto scaling, service discovery, and much more. But after running K8s for a week and testing failover scenarios, we faced problems such as:

- In K8s, the master manages the entire state of the cluster, and to make any change (a deployment or a configuration update) you need to make an API call to the master. If you are using K8s in production, you will need to set up a highly available (HA) master, and we found no supporting documentation for this.

- K8s releases new versions frequently, which is good, but updating a production cluster every 2-3 weeks is not practical.

- By default, Kubernetes creates an Internet-facing ELB on AWS, whereas we required internal ELBs for accessing services within the VPC.

- Kubernetes pod auto-scaling was available as a beta feature, and it requires CPU utilization data from Heapster. Under heavy load, we saw pod auto-scaling stop functioning, and debugging Heapster took a couple of days without any success.

After evaluating Kubernetes for almost 2 weeks, we dropped the idea of implementing K8s and started evaluating AWS ECS. The advantages we get with AWS ECS are:

- It integrates well with ELB, EC2, CloudWatch, and Auto Scaling.

- Cluster state management is handled by ECS, so there is no need to set up a master or run a complex state management system such as ZooKeeper.

- ELB health checks take care of registering and deregistering Docker containers with the ELB.

In the app stack, we have Node.js, Spring Boot, and Tomcat applications, so we started by creating base Docker images for this stack. The base Docker images include the basic tools we require in all containers, i.e. AWS CLI, Telnet, Nano, and other tools. We then use these custom base images in the service build plans to reduce build time; this also makes it easy to roll out a change to every image by simply updating the base Docker image.

Initially, we started with AWS ECR as the private Docker repository; the only challenge with AWS ECR was the increased deployment time. Because AWS ECR was not available in the Singapore Region at that time, we opted for a self-hosted private Docker registry for storing our Docker images. It uses AWS S3 for storing Docker images, and we use X.509 certificates for communication between clients and the registry.

You can set up an ECS cluster in several ways. By default, you can create the cluster from the ECS console, which runs a CloudFormation template in the backend. Alternatively, you can manually set up an Auto Scaling group with an AMI that has ECS support or a custom AMI, and pass the cluster name in the user data of the launch configuration. If you want to understand how we build Docker images and deploy them on AWS ECS, please refer to “How we Build, Test and Deploy Docker Containers” Build and Deploy Pipeline.
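For the manual route, the instance has to be told which cluster to join. The following is a minimal sketch, assuming an AMI on which the ECS agent reads its settings from `/etc/ecs/ecs.config`; the helper name `build_ecs_user_data` and the cluster name `qa-cluster` are hypothetical and not taken from our setup.

```python
def build_ecs_user_data(cluster_name: str) -> str:
    """Return a user-data shell script that points the ECS agent at a cluster.

    The agent picks up ECS_CLUSTER from /etc/ecs/ecs.config when it starts.
    """
    if not cluster_name or any(ch.isspace() for ch in cluster_name):
        raise ValueError("cluster name must be non-empty and contain no whitespace")
    return (
        "#!/bin/bash\n"
        f"echo ECS_CLUSTER={cluster_name} >> /etc/ecs/ecs.config\n"
    )


if __name__ == "__main__":
    # "qa-cluster" is a placeholder; use the name of your own cluster.
    print(build_ecs_user_data("qa-cluster"))
```

The generated script would then be supplied as the user data of the launch configuration behind the Auto Scaling group.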

By default, an ECS container instance comes up with 50 GB of storage for Docker, which was not enough for our use case. Therefore, we configured a custom base AMI with 300 GB of storage for Docker, a 100 GB partition for logs, Supervisor (to keep Logstash running), Logstash, and a startup script that downloads the latest configuration files from S3.

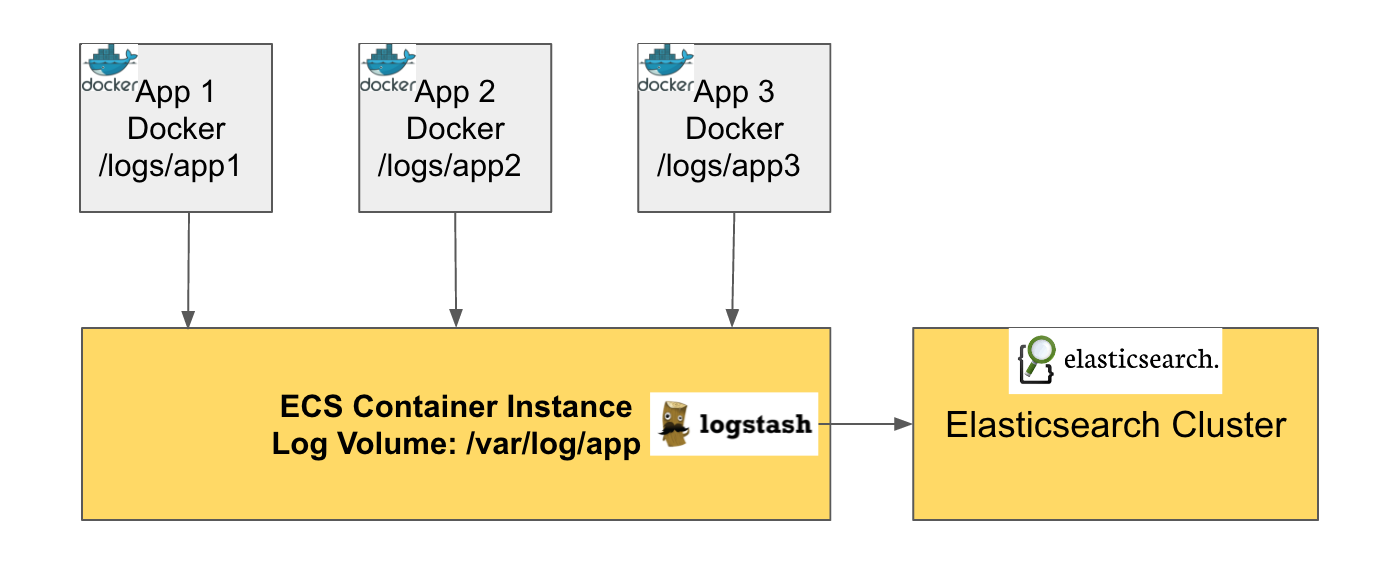

One of the most challenging tasks in any microservices ecosystem is centralized logging, as it requires a highly available and scalable logging system.

- First, before starting the migration to Docker, we made sure all applications in our ecosystem generate JSON logs. Because of this, we can avoid grok patterns and index log files directly into Elasticsearch.

- Second, we made sure each service's Docker container mounts a specific directory of the ECS container instance, so that all the logs are available in the same directory on every ECS container instance.

- Logstash reads all the log files from the above-mentioned directory and indexes the logs in Elasticsearch.
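To illustrate the first point, here is a small sketch of an application writing one JSON object per log line, which is what lets Logstash skip grok parsing. Our services are Java and Node.js applications; Python is used here only for illustration, and the field names (`timestamp`, `level`, `logger`, `message`) and the `build_logger` helper are assumptions rather than our actual log schema.

```python
import json
import logging
from datetime import datetime, timezone


class JsonFormatter(logging.Formatter):
    """Render every log record as a single-line JSON object."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "timestamp": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        if record.exc_info:
            # Keep stack traces inside the same JSON object.
            payload["exception"] = self.formatException(record.exc_info)
        return json.dumps(payload)


def build_logger(name: str, log_path: str) -> logging.Logger:
    """Create a logger that appends JSON lines to log_path.

    log_path would sit inside the directory mounted from the container instance.
    """
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    handler = logging.FileHandler(log_path)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    return logger
```

Because each line is already structured, the log shipper only needs to decode JSON before indexing.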

Challenges faced:

- A few days after the complete QA migration to ECS, we saw the AWS ECS agent going down frequently. The ECS agent runs on every node in the cluster and reports information about the EC2 instance and its Docker containers, such as CPU, memory, and network. If the agent goes down, the ECS cluster is unaware of the resources running on that container instance. To avoid this, we wrote a script that checks the agent status and, if the agent is disconnected, restarts the ECS agent.

- Earlier, container auto-scaling was not supported by AWS ECS, so we ended up writing a Lambda function triggered by CloudWatch alarms. AWS ECS now offers auto scaling by default, but in case you still want to see how we did it, check our blog “Using AWS Lambda Function for Auto Scaling of ECS Containers”.
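The two workarounds above can be sketched roughly as follows. This is an independent illustration, not the scripts we ran. The watchdog treats an unanswered local introspection endpoint as a sign the agent is down and assumes the agent runs as a Docker container named `ecs-agent`. The Lambda part assumes the CloudWatch alarm is delivered through SNS, and the names `qa-cluster` and `orders-service`, the step size, and the minimum and maximum counts are all hypothetical.

```python
import http.client
import json
import subprocess
import urllib.request

# --- Workaround 1: restart the ECS agent when it stops responding ---

# Local ECS agent introspection endpoint (default port 51678).
INTROSPECTION_URL = "http://localhost:51678/v1/metadata"


def agent_is_responding(url: str = INTROSPECTION_URL, timeout: float = 5.0) -> bool:
    """Return True if the local agent answers its introspection endpoint."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except (OSError, http.client.HTTPException):
        # Connection refused, timeouts and malformed replies all count as "down".
        return False


def restart_agent() -> None:
    """Restart the agent; assumes it runs as a container named `ecs-agent`."""
    subprocess.run(["docker", "restart", "ecs-agent"], check=True)


def ensure_agent_running(is_healthy=agent_is_responding, restart=restart_agent) -> bool:
    """Restart the agent if the probe fails; return True if a restart was issued."""
    if is_healthy():
        return False
    restart()
    return True


# --- Workaround 2: Lambda handler that scales out an ECS service ---

CLUSTER = "qa-cluster"      # hypothetical cluster name
SERVICE = "orders-service"  # hypothetical service name


def alarm_state_from_event(event: dict) -> str:
    """Read NewStateValue from a CloudWatch alarm notification delivered via SNS."""
    message = json.loads(event["Records"][0]["Sns"]["Message"])
    return message["NewStateValue"]


def next_desired_count(current: int, alarm_state: str, step: int = 1,
                       minimum: int = 2, maximum: int = 10) -> int:
    """Add `step` tasks when the alarm fires, then clamp to [minimum, maximum]."""
    target = current + step if alarm_state == "ALARM" else current
    return max(minimum, min(maximum, target))


def handler(event, context):
    """Lambda entry point: adjust the desired task count of one service."""
    import boto3  # imported lazily so the helpers above run without AWS access

    ecs = boto3.client("ecs")
    described = ecs.describe_services(cluster=CLUSTER, services=[SERVICE])
    current = described["services"][0]["desiredCount"]
    desired = next_desired_count(current, alarm_state_from_event(event))
    if desired != current:
        ecs.update_service(cluster=CLUSTER, service=SERVICE, desiredCount=desired)
    return {"previous": current, "desired": desired}
```

The watchdog would be run periodically on each container instance, for example from cron. The sketch only scales out; scaling in would need a second alarm and a negative step.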

Cost Savings:

By migrating to AWS ECS, we were able to reduce the number of EC2 instances required for application hosting by 70%, which resulted in 30% cost savings.

| Description | Time | No. of EC2 Instances |
|---|---|---|
| EC2 Instances for applications | Earlier | 222 |
| EC2 Instances in ECS Cluster | Now | 60 |
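As a quick check of the instance reduction, the figures in the table can be plugged into a simple percentage calculation. The function name below is only illustrative.

```python
def percent_reduction(before: int, after: int) -> float:
    """Return the percentage drop from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


if __name__ == "__main__":
    # Instance counts from the table: 222 earlier, 60 now.
    print(f"{percent_reduction(222, 60):.1f}%")  # prints 73.0%
```

Going from 222 to 60 instances is a drop of about 73%, in line with the roughly 70% reduction quoted above.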

Read the next blog in this series, “AWS Cost Optimization Series | Blog 4 | Databases Consolidation & Optimization”, for the same client.